Every day, businesses create millions of images from medical scans, factory cameras, satellite feeds, and online stores. Humans can’t process all this data quickly or consistently.

AI image segmentation helps by breaking images into smaller areas and labeling each one. This allows machines to recognize shapes, edges, and context, almost like a human eye.

The field has evolved rapidly. While earlier segmentation systems relied on convolutional neural networks (CNNs) designed for specific tasks, today's most powerful models are built on Vision Transformer (ViT) architectures.

These foundation models, such as Meta's SAM 3, can handle larger-scale, continuous learning and adapt to new domains far more easily than older approaches.

At Azumo, we work across both established and cutting-edge architectures to build AI image segmentation systems that match each client's requirements.

In this article, we will explain how image segmentation works, the main techniques and types used, the leading models in the field, real-world applications across different industries, and how Azumo uses this technology to create practical, production-ready computer vision solutions.

What is AI Image Segmentation and How Does It Work?

Imagine coloring in a photo, but with purpose. Instead of just drawing a box around an object, AI labels every pixel in the image. This gives machines a complete understanding of what is in a picture and where each part is located.

Image segmentation with AI looks at color, texture, shape, and brightness to divide an image into meaningful regions. Unlike image classification, which gives one label to the whole picture, or object detection, which draws boxes around objects, segmentation provides detailed, pixel-level information.

The process generally involves preparing the image, letting a neural network identify patterns and shapes, and then labeling each pixel. The final output is a color-coded map showing exactly what belongs to each category.

This level of detail makes image segmentation essential for fields like healthcare, manufacturing, and autonomous vehicles, where precision matters most.

What Are The Types of AI Image Segmentation? Semantic vs Instance vs Panoptic Segmentation

Not all image segmentation types work the same way, and the right choice depends on what you need to accomplish. There are three primary approaches, each offering a different level of detail and suited for different use cases.

Now let's look at each one:

Semantic Segmentation

Semantic segmentation assigns a class to every pixel in an image. For example, if a model is trained to recognize roads, trees, and sky, it will label every road pixel as “road,” every tree pixel as “tree,” and so on. The limitation is that it does not distinguish individual objects of the same class. Two cars parked side by side are both labeled “car” without differentiation.

This works well for tasks like understanding a scene, detecting defects in manufacturing, analyzing lanes for autonomous driving, and medical image classification. Popular architectures include FCN, DeepLabV3+, and SegNet. Semantic segmentation is ideal when you need to know what is present but not how many objects there are.

Instance Segmentation

Instance segmentation goes further by identifying each object separately. For example, three people standing together would be labeled as person1, person2, and person3, each with its own pixel mask. The main difference between semantic and instance segmentation is that semantic tells you what is in the scene, while instance tells you which one.

This method requires both object detection and pixel-level masking, so it uses more computing power. Mask R-CNN, developed by Facebook AI Research in 2017, is a leading model for this approach. Use cases include tracking surgical tools, picking products in factories, and analyzing crowds.

It takes more computing power than semantic segmentation, but it is needed when you want to track individual objects.

Panoptic Segmentation

Panoptic segmentation combines the best of semantic and instance segmentation. It labels every pixel with a class and assigns unique IDs to individual objects. This means it can handle “things” like people and cars, as well as “stuff” like sky or grass, in a single map.

Models such as EfficientPS, PanopticFPN, and UPSNet are used for this approach. Panoptic segmentation provides the most complete understanding of a scene, making it ideal for autonomous driving, full-scene parsing, and advanced robotics. The trade-off is that it requires the most computing power of the three types.

Image Segmentation vs. Object Detection vs. Image Classification

These 3 techniques often get confused, so let's clear it up.

- Image classification answers a simple question. It tells you what is in the image by giving one label to the entire picture.

- Object detection adds more detail. It finds different objects in the image and shows where they are by drawing boxes around them.

- Image segmentation goes even further. Instead of drawing boxes, it labels every pixel in the image. This allows the system to show the exact shape and boundaries of each object.

We can explain this difference with a simple example. If you only need to know whether a product has a defect, image classification may be enough. If you need to know where the defect appears on the product, object detection is more useful.

But if you want to understand the exact size and shape of the defect, image segmentation techniques are the best option. They give machines a much deeper understanding of what they see.

What Are The Pros and Cons of AI Image Segmentation?

Like any technology, AI image segmentation has both advantages and challenges. Understanding these trade-offs helps businesses decide when and how to use it.

One of the biggest challenges is data labeling. Because every pixel must be marked, annotating a single image can take between 10 and 30 minutes when scenes are complex. This effort grows quickly when thousands of images are needed. New research is helping reduce this burden.

A study published in Nature Communications on the GenSeg framework showed that some modern methods can improve accuracy by 10 to 20 percent while using 8 to 20 times less training data in medical imaging tasks. New foundation models such as Segment Anything (SAM) are also helping by allowing segmentation with little or no task-specific training data.

Computing cost is another factor to consider. Training large segmentation models often requires GPU infrastructure, and running them on high-resolution images can demand careful optimization.

With the right model choice, data strategy, and system design, these challenges can be managed effectively. This is where working with an experienced development partner can make a real difference.

What Are the Real-World Use Cases of AI Image Segmentation?

AI image segmentation is already used in many industries. It helps systems understand images at a very detailed level, which makes it useful for tasks where accuracy matters.

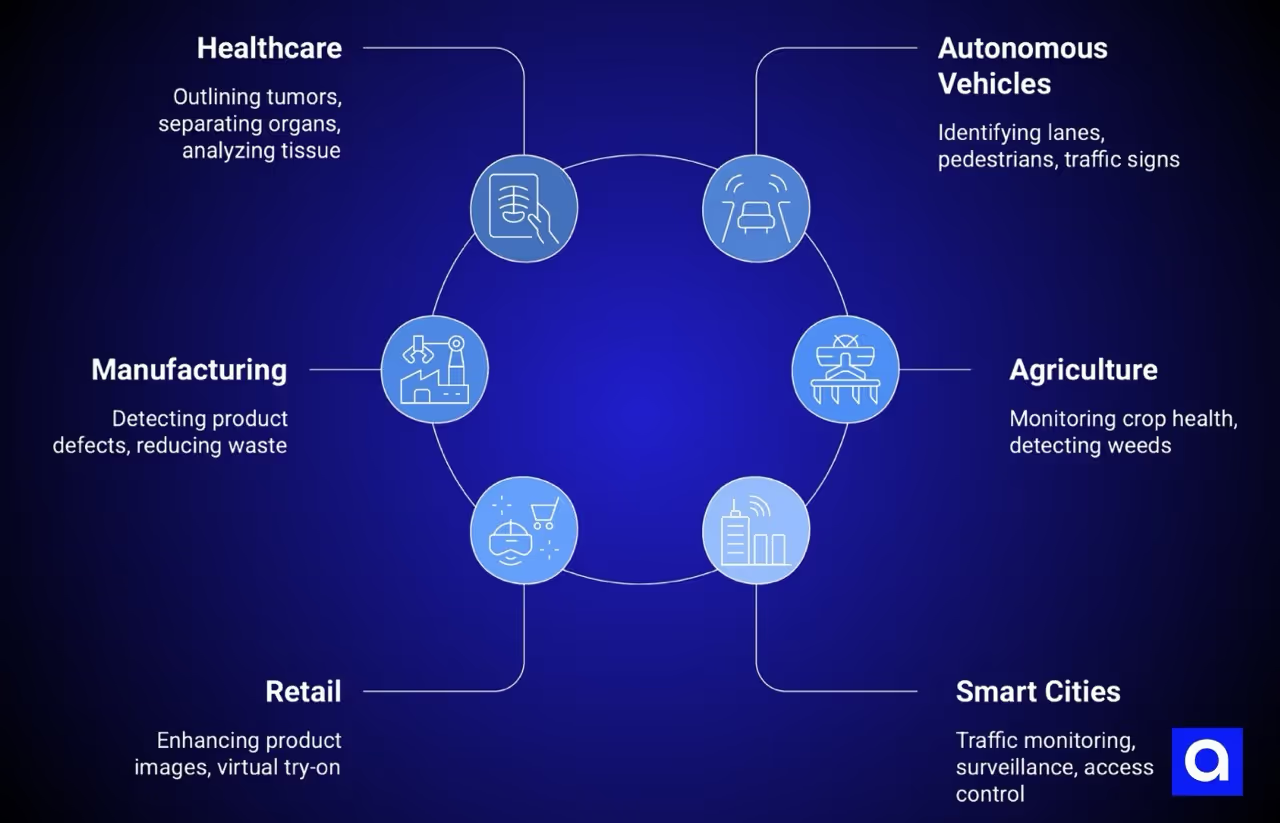

Healthcare and Medical Imaging

Healthcare is one of the most important areas for image segmentation. AI models can outline tumors in MRI and CT scans, separate organs for surgical planning, and analyze tissue in pathology images. In some cases, AI systems can detect lung nodules with accuracy close to that of experienced radiologists. The demand for these tools is growing quickly.

According to Valuates Reports, the semantic segmentation services market is expected to grow from $7.1 billion to $14.6 billion by 2030.

Autonomous Vehicles

Self-driving systems rely heavily on image segmentation to understand the road. These models identify lanes, road surfaces, pedestrians, cyclists, and traffic signs in real time. This detailed scene understanding helps vehicles make safe driving decisions. Edge devices such as NVIDIA Jetson allow these models to run fast enough for real-world driving conditions.

Manufacturing and Quality Control

Manufacturers use image segmentation to find product defects automatically. Instead of relying only on human inspectors, AI systems can detect cracks, surface damage, or shape problems with pixel-level accuracy. This helps companies catch issues earlier, reduce waste, and prevent faulty products from reaching customers.

Agriculture and Satellite Imaging

In agriculture, segmentation helps analyze images from drones and satellites. Farmers can monitor crop health, detect weeds, map flooding, and study land use. With this information, they can apply herbicides only where weeds appear instead of spraying entire fields. This approach saves money and reduces environmental impact.

Retail and E-Commerce

Retail companies use segmentation to improve product images and online shopping experiences. It can automatically remove backgrounds from product photos, support virtual try-on features, and power visual product search. These tools make online shopping easier and can improve conversion rates.

Smart Cities and Security

Cities and security systems use image segmentation for tasks such as traffic monitoring, surveillance analysis, and access control. The technology helps systems distinguish between people, vehicles, and other objects in busy urban environments. This supports better traffic management and improved public safety.

When combined with facial recognition, segmentation models can distinguish and track specific individuals within a crowd, enabling more precise identity verification in high-security environments.

Additional: Document Processing and Data Extraction

Image segmentation also plays a key role in automating document processing. Identifying and isolating specific fields within structured documents, such as names, addresses, and ID numbers on a driver's license, requires precise detection of text regions across varied layouts, fonts, and image quality conditions.

Azumo applied this approach in a recent project with CENTEGIX, a leading provider of school safety solutions. The team automated data extraction from driver's licenses for their visitor management system, using YOLO-based object detection to segment and locate fields across diverse document formats, combined with OCR for text extraction.

The proof of concept achieved over 80% accuracy in both field detection and text extraction, validating that AI-powered segmentation could meet operational requirements for real-time processing.

Traditional vs. Modern Image Segmentation Methods

Image segmentation has changed a lot over the years. Early systems followed fixed rules written by engineers. Today, most systems use deep learning models that learn from large datasets. This shift has made segmentation far more accurate and much better at handling real-world images.

Traditional Segmentation Methods

For many years, image segmentation depended on simple rules. Engineers designed these rules to separate parts of an image based on visible patterns.

Some common methods included thresholding, edge detection, region-based segmentation, and clustering algorithms such as k-means.

Thresholding separates pixels based on brightness or color. Edge detection looks for sudden changes in an image to find object boundaries. Region-based methods group pixels that look similar, while clustering algorithms place pixels into groups based on shared features.

These methods worked well in simple situations. But real-world images are rarely simple. Lighting changes, objects overlap, and scenes contain many details. In those cases, traditional approaches often struggled.

Early Deep Learning Breakthroughs

The rise of deep learning transformed computer vision. In 2012, AlexNet demonstrated that neural networks could outperform traditional methods on image recognition tasks.

A few years later, Fully Convolutional Networks (FCNs) introduced a new approach designed specifically for semantic segmentation. These models used only convolutional layers and significantly improved performance on common benchmarks such as Pascal VOC.

Modern Segmentation Models

Later architectures continued to push the field forward. In 2015, U-Net introduced an encoder-decoder design with skip connections. This architecture captures both detailed features and broader context, which makes it especially effective for medical imaging, where labeled data is limited.

The most significant architectural shift in recent years has been the rise of Vision Transformers (ViTs). Introduced in 2020, ViTs process images as sequences of patches rather than through convolutional filters, using self-attention mechanisms to model relationships between different parts of an image, even when they are far apart.

This design allows ViTs to capture global context more effectively than CNNs, which is why they now serve as the backbone for today's most powerful segmentation foundation models.

Unlike older CNN-based architectures that were typically trained for a single task, ViT-based foundation models are highly adaptable. They support continuous learning, can be fine-tuned with lightweight adapters for domain-specific applications, and scale to billions of parameters.

This foundation model approach is what makes modern segmentation so much more flexible and powerful than earlier deep learning methods.

Another major step came with the release of the Segment Anything Model (SAM) series. SAM 1 (2023) introduced interactive segmentation with visual prompts, and SAM 2 (2024) extended this to video tracking.

Then in November 2025, Meta released SAM 3, the most substantial update yet. SAM 3 is a unified model that can detect, segment, and track objects in both images and video using text prompts, image exemplars, or visual clicks.

Unlike its predecessors, which required a human to click on or box an object, SAM 3 accepts open-vocabulary text prompts: you can simply type a short phrase like 'yellow school bus,' and it will find and mask every matching instance in the scene.

Built on a ViT-based architecture with 848 million parameters, SAM 3 doubles the accuracy of previous systems on concept-level segmentation tasks, and its dataset includes over 4 million unique concept labels.

This represents a shift from geometry-focused segmentation to concept-level visual understanding, a capability Azumo leverages in its computer vision workflows.

Overall, image segmentation has moved from simple rule-based methods to powerful AI-driven systems that are faster, more flexible, and much more accurate.

What Are The Image Segmentation Models?

There are many image segmentation models, and the right one depends on what you are trying to build. The choice usually comes down to three things. First, the type of problem you want to solve. Second, how much labeled data you have. Third, how much computing power you can use.

Because of this, there is no single model that works best for every situation. Some models perform well with small datasets. Others need large amounts of training data but deliver higher accuracy. The table below shows several well-known segmentation models and where they are commonly used.

U-Net

U-Net is one of the most widely used models for semantic segmentation. It has an encoder that captures image features and a decoder that rebuilds the image while predicting the segmentation mask. Skip connections help the model keep fine details. Because it performs well even with smaller datasets, the U-Net is widely used in medical imaging.

Mask R-CNN

Mask R-CNN is a leading model for instance segmentation. It detects objects in an image and then creates a pixel-level mask for each one. This allows the system to show both the location and the exact shape of every object. It is often used in tasks such as object counting, robotic picking, and surgical tool tracking.

SAM 3 (Meta)

SAM 3, released in November 2025, is Meta's latest foundation model for segmentation. It detects, segments, and tracks objects across images and video using text prompts, image exemplars, or interactive visual prompts like clicks and boxes.

Its architecture consists of a DETR-based image-level detector and a memory-based video tracker sharing a single ViT backbone. SAM 3 was trained on the SA-Co dataset, which contains over 4 million unique concept labels. It doubles the accuracy of previous systems on promptable concept segmentation benchmarks.

Because it supports open-vocabulary text prompts, teams can segment objects by simply describing them in natural language. This makes it ideal for rapid prototyping, auto-labeling large datasets, and multi-domain applications where retraining is impractical.

At Azumo, SAM 3 represents the kind of modern foundation model we fine-tune and deploy for clients across healthcare, manufacturing, and document processing

DeepLabV3+

DeepLabV3+ is designed for high-accuracy semantic segmentation in complex scenes. It uses atrous convolution to capture information at different scales without losing resolution. This allows the model to understand both small details and larger image context. It is commonly used for natural scene analysis and outdoor environments.

HRNet

HRNet focuses on maintaining high-resolution features throughout the network. Instead of reducing image resolution and restoring it later, the model keeps multiple resolution streams active at the same time. This approach improves spatial precision. HRNet is often used when accurate boundaries and fine visual details are important.

OMG-Seg

OMG-Seg is a newer architecture designed to handle multiple segmentation tasks within a single system. It can perform several vision tasks at once, including segmentation and scene understanding. This makes it useful in environments where models need to process different types of visual data together. The trade-off is higher computational demand.

How Azumo Uses AI Image Segmentation for Visual Data Processing

Since 2016, Azumo has delivered AI development services across the full machine learning lifecycle, including computer vision solutions that move from data assessment and model selection all the way through training, evaluation, and production deployment.

For teams building more autonomous pipelines, pairing segmentation models with the right AI agent development tools can enable end-to-end visual workflows that detect, classify, and act on image data without human intervention.

Building Precise Segmentation Models

Azumo's segmentation models can find each object in an image and mark its exact shape. They work even when there are many objects of the same type in one picture.

This level of detail is useful for counting products on shelves, detecting defects on production lines, marking tissue in medical scans, and interpreting complex technical documents such as floor plans and construction blueprints.

For example, at Azumo, we’ve once fine-tuned a ViT-based segmentation model to automatically detect rooms, doors, windows, and walls in architectural blueprints. Using approximately 216 images with around 4,000 annotations, the model could identify and outline building features with enough accuracy to calculate square footage for each detected room.

We used post-processing algorithms to simplify output polygons and produce cleaner, more accurate results.

This project shows that teams can fine-tune modern foundation models like SAM 3 in one to two days using small, well-annotated datasets to solve specialized segmentation tasks. To reach production-level accuracy, they need to expand the dataset to around 1,500 to 5,000 images and further refine the post-processing pipeline.

Running Models on Edge and Cloud

Azumo makes sure the models can run where they are needed. For tasks that need fast processing on site, like factory cameras or mobile tools, the models are set up to run on edge devices. For tasks that need more computing power, they run on cloud platforms such as AWS, Azure, or Google Cloud.

In all cases, the systems keep learning and improving over time. The team uses structured training and performance checks to make sure the models stay accurate and reliable.

Applications and Compliance

Azumo uses image segmentation in many industries. In healthcare, it helps with medical scans and diagnostics. In manufacturing, it finds defects and improves quality. In e-commerce, it supports inventory checks and visual search. In fintech, it helps process and verify documents.

The technology can be added to existing software through REST APIs. Azumo is SOC 2 certified and follows HIPAA, GDPR, and SOX rules. This makes it safe for industries that need high security. Every project follows a clear path from testing the idea to building, improving, and maintaining the system.

We also offer a free consultation. Teams can use this to see if their computer vision project is possible and decide the best approach before building a full system.

.avif)