.avif)

The global image recognition market reached $68.46 billion in 2026 and may reach $163.75 billion by 2032.

At the center of that growth sits image classification, the basic computer vision task that teaches AI to look at a photo and answer one question: "What is this?" From spotting tumors on a chest X-ray to sorting bad parts on a factory floor, it can be the starting point for almost every visual AI tool you can think of.

This article breaks down how image classification works, which models matter in 2026, where companies are using it, and how our team at Azumo builds and rolls out these systems for clients in healthcare, manufacturing, retail, and more.

What Is Image Classification and How Does It Work?

Image classification is a computer vision task where an AI model looks at an image and gives it one or more set labels based on what it sees. For example, you glance at a photo of a golden retriever and right away know it is a dog (your brain does that in a split second).

For a machine, that same task needs layers of math, millions of training examples, and a lot of computing power.

We can say that image classification serves as the starting point for more advanced tasks like object detection and image segmentation. The model answers "what" but not "where" in the image that thing is.

Modern AI image classification uses deep learning. Convolutional Neural Networks (CNNs) have been the backbone of image classification for over a decade and remain important for many production systems.

However, the field has shifted significantly toward Vision Transformer (ViT) architectures, which process images as sequences of patches using attention mechanisms rather than convolutional filters.

ViTs allow for highly complex, adaptable foundation models that handle larger-scale, continuous learning much better than older architectures.

Today's most powerful classification models, including Meta's DINOv3, are built on ViT backbones and can learn universal visual features without any labeled data, then be adapted to specific tasks with minimal effort.

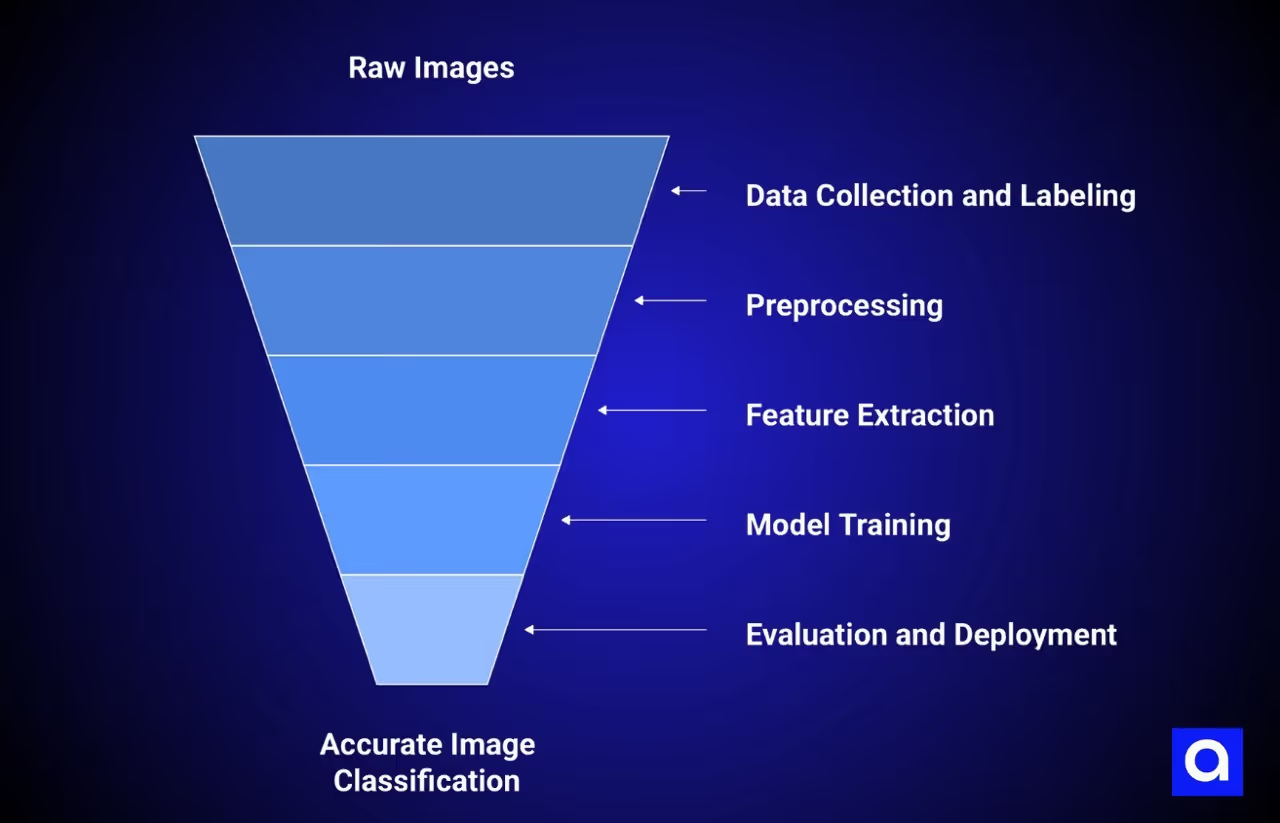

Here is how the process works:

- Data collection and labeling. You gather thousands or millions of images and tag each one with the right label.

- Preprocessing. Images are resized and often changed with random flips, rotations, or color shifts. This step can help stop the model from memorizing specific training images instead of learning general patterns.

- Feature extraction. CNN Layers or Vision Transformers (ViT) scan the image and learn patterns at different levels, from edges and textures in early layers to shapes and whole objects deeper in. The model learns straight from raw pixel data, so there is no need to build features by hand.

- Model training. The model adjusts its settings to reduce prediction errors, most often through supervised learning where labeled examples guide the process.

- Evaluation and deployment. You test the model on images it has never seen, measure accuracy, and put it into use where it can sort new images in real time.

For certain tasks, AI image classification can already beat humans. Face recognition is one area where AI can consistently do better than people, though for narrow, well-defined tasks in general, these models can perform very well.

What Are The Types of Image Classification?

Depending on the problem, the data, and the tools available, there are several different approaches to choose from.

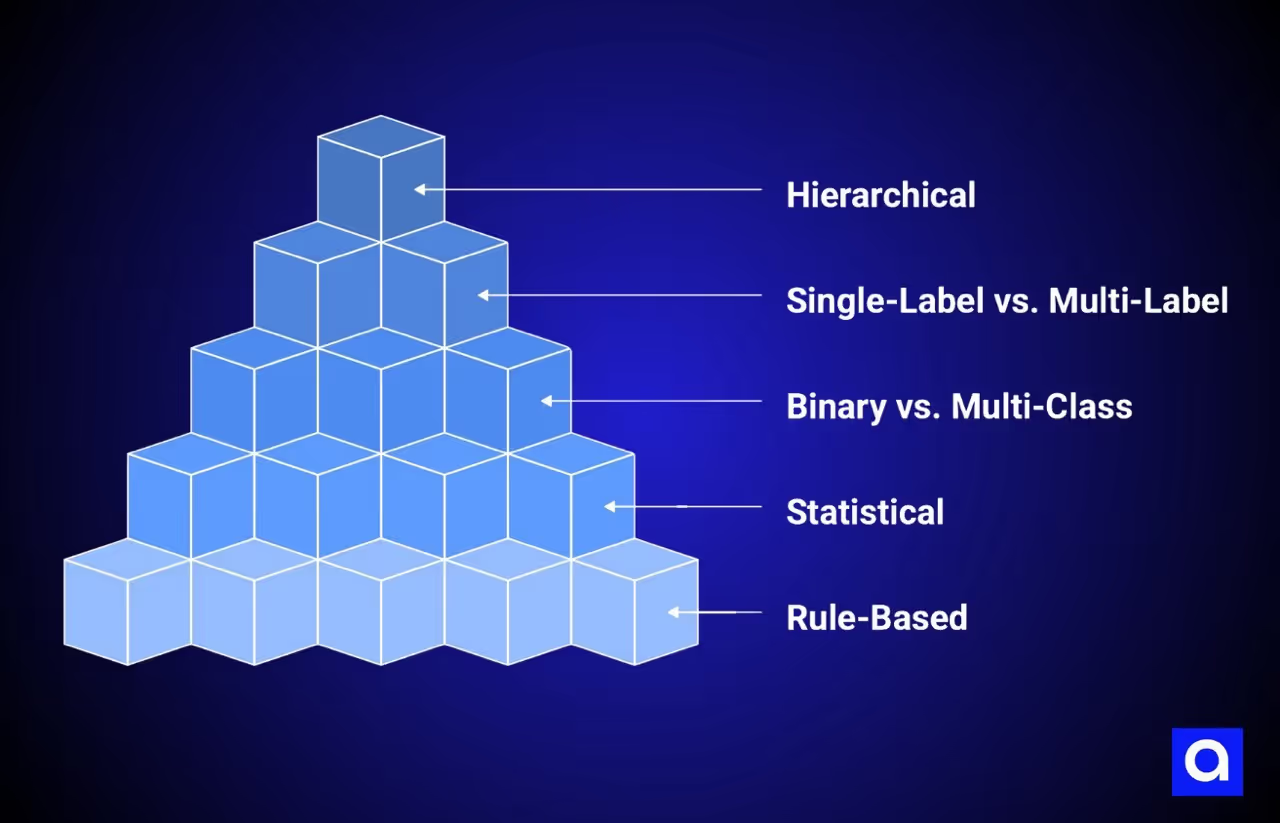

Rule-Based Image Classification

Engineers write set rules that tell the system how to sort images, for example, "if more than 60% of pixels are green, label this as vegetation." No learning happens here. The system only knows what a human has told it, which means it breaks down fast when images get messy, lighting changes, or new groups appear. Upkeep becomes a problem, and the system never gets better on its own.

Statistical Image Classification

Statistical methods use probability models to sort images and split them into two types: distribution-based and distribution-free. Satellite image analysis relied on these for decades, and some are still used today for land mapping.

Distribution-based methods assume your data follows a known pattern, usually a bell curve. The classic example is Maximum Likelihood Classification (MLC), which assigns each pixel to the group with the highest probability. When the data fits that pattern, MLC can work well, but real-world noise can cause accuracy to drop.

Distribution-free methods skip those assumptions and look at the data as it is. Common examples include K-Nearest Neighbors (KNN), which sorts an image based on similarity to nearby training examples, and Support Vector Machines (SVM), which find the best boundary between groups. SVMs can be especially good when the data is complex and the distribution shape is unknown.

Binary vs. Multi-Class Classification

Binary classification has two possible outputs: spam or not spam, tumor or no tumor. Multi-class classification opens up to three or more labels, asking "is this a cat, dog, bird, or horse?" Most real-world uses need multi-class models because the world rarely comes in a simple yes-or-no form.

Single-Label vs. Multi-Label Classification

Single-label classification gives each image exactly one tag. Multi-label classification allows several tags at once, so a vacation photo might get labeled "beach," "sunset," and "people" all together. This matters for social media tagging, product catalogs, and medical imaging, where a single scan might show more than one condition.

Hierarchical Classification

Here, groups sit in a tree structure from broad to specific: Animal → Mammal → Dog → Labrador Retriever. If the model is not sure enough to call it a Labrador, it can still output "Dog" and give useful information. This works well for large-scale systems like e-commerce catalogs with thousands of items, giving the model a way to fall back to a safer answer rather than forcing a wrong, specific one.

What Are Image Classification Models and Algorithms?

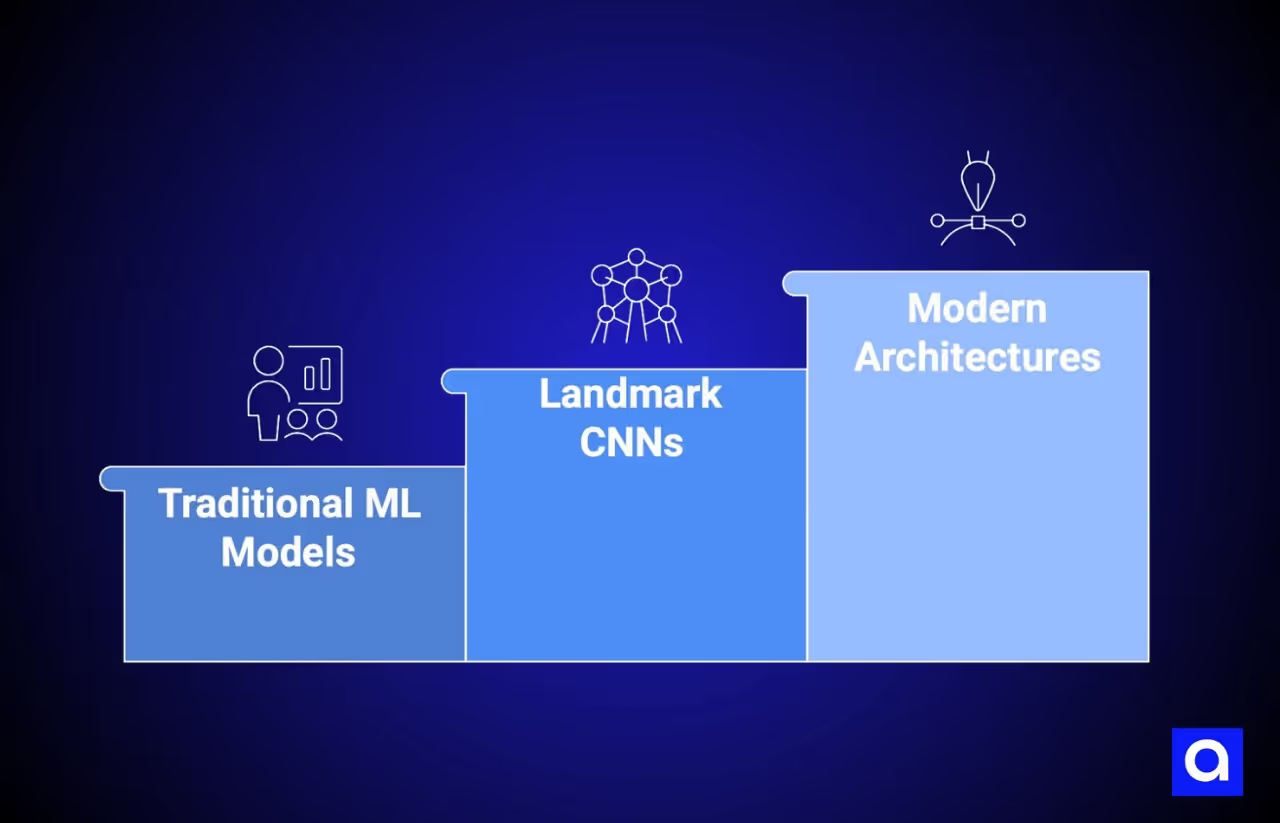

Traditional ML Models

Before deep learning took over, teams used K-Nearest Neighbors (KNN), Support Vector Machines (SVM), and Random Forests. These can still work for small datasets. They train faster and need less computing power.

Landmark CNN Architectures

AlexNet (2012) cut error rates sharply in the ImageNet competition and marked a turning point for deep learning in vision. GoogLeNet/Inception followed with a design that runs multiple filter paths at once, making the network deeper without increasing the number of settings too much. ResNet added shortcut connections that let data flow through very deep networks, solving a common training problem and making it practical to build networks with hundreds of layers.

Modern Architectures

Vision Transformers (ViT) brought a model type originally built for text into the image space. Instead of scanning pixels with filters, ViT splits an image into patches and processes them using attention mechanisms.

This architectural shift is significant because ViTs allow for highly complex, adaptable foundation models that handle larger-scale, continuous learning much better than older CNN-based architectures.

Where CNNs capture local patterns through convolutional filters, ViTs use self-attention to model relationships across the entire image, which is why they now serve as the backbone for the most powerful classification models available.

Swin Transformer takes the ViT approach further with layered, shifted-window processing, making it strong for detection and grouping tasks alongside classification.

Meta's DINOv3, released in August 2025, represents the current state of the art in self-supervised visual feature learning. DINOv3 is a Vision Transformer trained on 1.7 billion images with a 7-billion-parameter teacher model, using a teacher-student distillation approach that requires no labeled data at all.

It introduces techniques like Gram anchoring to maintain the quality of both global features (used for classification) and local patch-level features (used for segmentation and detection) during long training runs, a problem that plagued earlier models.

DINOv3's frozen features achieve state-of-the-art performance on image classification benchmarks like ImageNet, and its embeddings can be paired with a simple linear classifier to produce highly competitive results without any fine-tuning.

Meta has released DINOv3 in multiple sizes, from 21 million to 7 billion parameters, under a commercial license, making it practical for both resource-constrained edge deployments and large-scale cloud applications.

At Azumo, DINOv3 is part of our modern classification toolkit, selected when clients need strong visual features without the overhead of building and maintaining large labeled datasets.

The YOLO family has also set new benchmarks for speed and accuracy in recent years, making real-time classification and detection more accessible for production environments.

What Are The Key Concepts to Know?

Transfer learning lets you take a model already trained on millions of images and adapt it to your specific task, reducing both the data and time you need.

Modern foundation models like DINOv3 take this even further: their frozen features are so powerful that you can often skip fine-tuning entirely, using a simple linear classifier on top of the pretrained backbone to get competitive classification results.

Data augmentation increases training diversity by applying random transformations to existing images, which can help prevent overfitting. AutoML platforms handle model selection and tuning automatically, making image classification more accessible to teams without deep machine learning expertise.

Image Classification vs. Object Detection vs. Image Segmentation

These three tasks are often mixed up. Here is a simple comparison:

So, classification assigns a label to the whole image, but cannot tell you where the object sits. Object detection adds location by drawing boxes around each identified object. Segmentation goes further still, assigning a class to every single pixel, with two sub-types: semantic (all pixels of a class share one label) and instance (each individual object gets its own label).

Complex systems can use all three together. Self-driving cars are a good example: segmentation identifies drivable road surfaces, detection finds pedestrians and vehicles, and classification reads road signs. The trade-off is clear: more detail means more computing cost and more labeled training data.

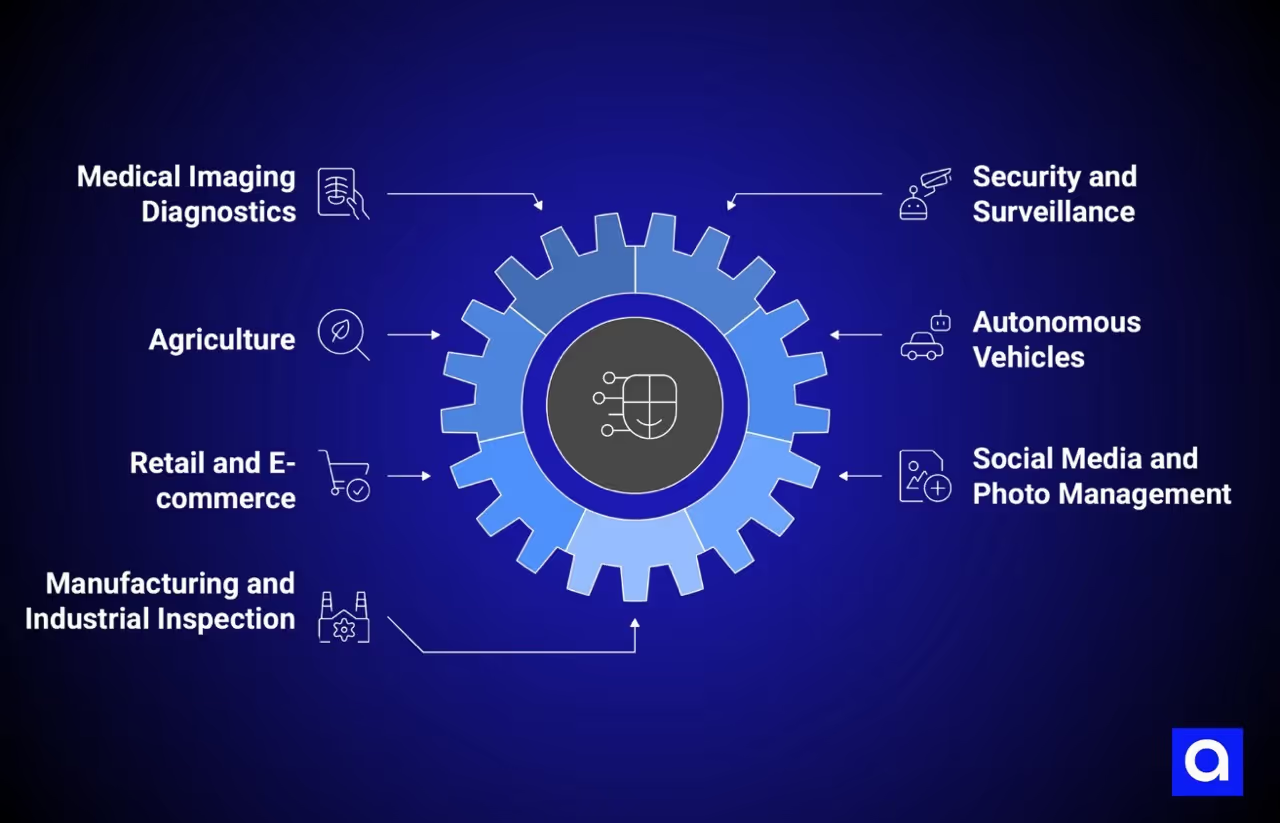

What Are the Most Common Real-World Image Classification Use Cases?

Medical Imaging Diagnostics

AI classification models can look at X-rays, MRIs, and CT scans to find tumors, pneumonia, and eye disease. AI diagnostic tools may now exceed 95% accuracy in areas like lung cancer detection and retinal disease screening. One CNN reached 93.06% accuracy sorting lung cancer types from CT scans, and a study in Wiley iRADIOLOGY found that InceptionV3 hit 95.56% accuracy for diabetic eye disease detection using just 3,662 training images. The benefits can include earlier detection, a lighter workload for doctors, and consistent second opinions.

Security and Surveillance

Modern surveillance systems can spot unusual activity, recognize faces, and track objects in real time. Facial recognition held roughly 23% of the image recognition market share in 2024, with uses ranging from access control and identity checks to perimeter security and crowd monitoring. Classification tools can flag suspicious activity automatically, removing the need for someone to watch every camera feed all day.

Agriculture

AI models can detect plant diseases from leaf images, with one model able to sort 13 different diseases with high accuracy. Satellite and drone image classification can support crop health monitoring and yield prediction at scale, giving farmers a chance to step in before disease spreads. Our computer vision services include scene analysis that can support this kind of agricultural monitoring.

Autonomous Vehicles

Self-driving systems can use image classification to identify road signs, traffic lights, people, and other vehicles. Classification works alongside detection and segmentation to give the vehicle a full picture of its surroundings. And this way, on-device AI can make real-time, low-delay responses possible.

Retail and E-commerce

AI-powered recommendation systems may increase revenues by up to 15%, with personalized suggestions accounting for up to 30% of e-commerce site revenues. According to Microsoft Azure, Goodwill used Azure Vision to pull item details from photos, boosting clothing sales by over 35%. Visual search adds another layer, letting shoppers upload a photo to find similar products right away.

Social Media and Photo Management

Since Google Photos launched in 2015, platforms have used classification to auto-tag, organize, and surface content based on what is in the image, as Google's ML Practicum documents. Content moderation at scale, spotting harmful or copyrighted images across billions of uploads, relies on the same foundations.

Manufacturing and Industrial Inspection

Siemens reported a 90% drop in false positives and a 50% increase in defect detection accuracy after adding AI-based image classification to electronics manufacturing. Cameras on assembly lines feed images into classification models that sort products as good or defective, catching color issues, tears, and breaks that human inspectors might miss.

Document Processing and Data Extraction

Image classification also plays a growing role in automated document processing. Classifying field types on structured documents, such as distinguishing a name field from a date field or an address field on a driver's license, requires models that can generalize across varied layouts, fonts, and image quality conditions.

We applied this in a project with CENTEGIX, a leading provider of school safety solutions. The team automated data extraction from driver's licenses for their visitor management system, using YOLO-based object detection to classify and locate fields across diverse document formats from different states, combined with OCR engines for text extraction.

Multiple model variants were tested to find the right balance between classification accuracy and processing speed for real-time use.

The proof of concept achieved over 80% accuracy in both field detection and text extraction, validating that AI-powered classification could meet operational requirements.

How Azumo Applies Image Classification to Solve Real-World Challenges

At Azumo, we build computer vision solutions using both established CNN architectures and modern Vision Transformer-based foundation models like DINOv3. We select the right architecture for each use case: CNNs for lightweight, speed-critical edge deployments, and ViT-based models like DINOv3 when clients need strong visual features that generalize across domains without large labeled datasets.

Our systems handle large volumes of visual data in live environments, deployed at the device level for fast, low-latency use and in the cloud for heavy workloads, with tools that keep models improving over time.

Our computer vision work can deliver:

- Real-time object detection and recognition, from spotting products on store shelves to watching traffic patterns

- Image classification and tagging for content libraries, medical records, and factory lines

- Facial recognition and biometrics for secure sign-in and access control

- Scene analysis for farming, satellite imagery, and environmental monitoring

- OCR and text extraction from documents, receipts, and forms

- ID verification through computer vision-powered identity checks

- Visual search and defect detection for e-commerce and quality control

We work across fintech, healthcare, manufacturing, retail and e-commerce, media and entertainment, and oil and gas. Our AI development services cover custom model training, LLM application development, NLP, and AI agent development alongside our computer vision work. Azumo is SOC 2 certified and experienced with HIPAA, GDPR, and SOX requirements, so our AI solutions are built with security and compliance from the start.

If you are thinking about adding image classification to your product or workflow, we would love to talk. Get in touch and let's look at what a custom computer vision solution could look like for your team.

.avif)