.avif)

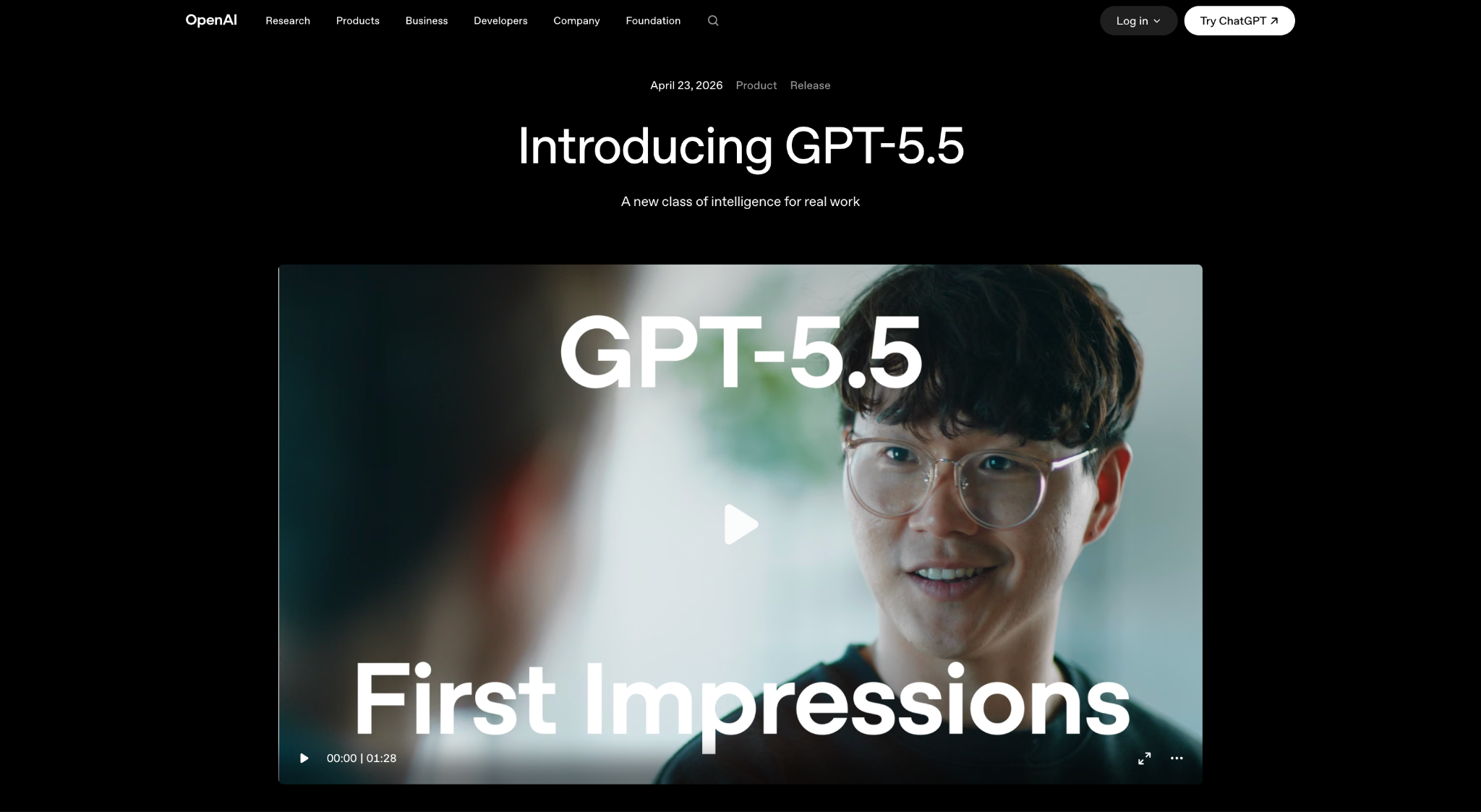

The LLM market has changed quickly in 2026. Since earlier rankings, OpenAI has introduced GPT-5.5, Anthropic has released Claude Opus 4.7, Google has launched Gemini 3.5 Flash, xAI has moved API traffic toward Grok 4.3, and Mistral has expanded its lineup with Mistral Medium 3.5 and Mistral Large 3. These updates matter because rankings based on older versions may no longer reflect current coding, reasoning, pricing, or enterprise deployment performance.

For private benchmarking against your own data, see our enterprise LLM evaluation services.

Key highlights include:

- Long-context analysis: GPT-5.5, Claude Opus 4.7, Grok 4.3, Llama 4 Scout, and Llama 4 Maverick should be compared for document-heavy workflows, codebase review, research, and enterprise knowledge systems.

- Advanced reasoning: GPT-5.5 and Claude Opus 4.7 are the main frontier models to compare for complex professional work, coding, research, and multi-step reasoning.

- Coding and agent workflows: Claude Opus 4.7, GPT-5.5, Qwen3-Coder, and Mistral Medium 3.5 are strong candidates for software engineering and agentic development tasks.

- Real-time and tool-based workflows: Grok 4.3 is a stronger current replacement for Grok 4.1, with a 1M context window and agentic tool-calling focus.

- Open-weight options: Llama 4 Scout, Llama 4 Maverick, Mistral Large 3, Qwen3-Coder, and GLM-4.7 help teams that need more deployment control, lower vendor dependency, or self-hosting flexibility.

The analysis compares current LLM pricing, access models, context windows, benchmark relevance, open-source availability, and deployment flexibility. Instead of treating one model as the universal winner, this guide helps you compare the best LLMs for advanced reasoning, coding, real-time research, long-context analysis, cost-sensitive workflows, and enterprise AI development.

Current Market Leaders and Performance Benchmarks

Reasoning and Intelligence Champions

Gemini 3.5 Flash is the Gemini model to cover for current reasoning and intelligence comparisons. It is Google’s latest Gemini 3.5 release and is positioned for agentic execution, coding, multimodal work, and complex long-horizon tasks. Google says Gemini 3.5 Flash delivers frontier performance for agents and coding, and its developer documentation lists it as generally available with a 1 million token context window.

With its large context window and stronger agentic capabilities, Gemini 3.5 Flash is especially relevant for workflows that require sustained reasoning across long documents, codebases, structured instructions, and multimodal inputs. Gemini 3 Pro was a stronger fit for the earlier benchmark snapshot, but Gemini 3.5 Flash is now the more relevant Gemini model for this section because the article is targeting current queries like “best LLM right now,” “smartest LLM,” and “current best AI model.”

Grok 4.3 is now the stronger xAI model to cover reasoning, tool use, and current-information workflows. It supports configurable reasoning, a 1 million token context window, and agentic tool calling, making it a better fit than Grok 4.1 for multi-step tasks that require live research, long-context analysis, or external tool use. xAI lists Grok 4.3 with $1.25 input and $2.50 output pricing per 1 million tokens.

Its main advantage is not only raw reasoning performance, but its connection to real-time workflows. For applications that need current information, market updates, social trend analysis, or tool-based research, Grok 4.3 is more relevant than older Grok 4.1 references. Grok 4.1 can be mentioned only as an earlier benchmark result, not as the current Grok recommendation.

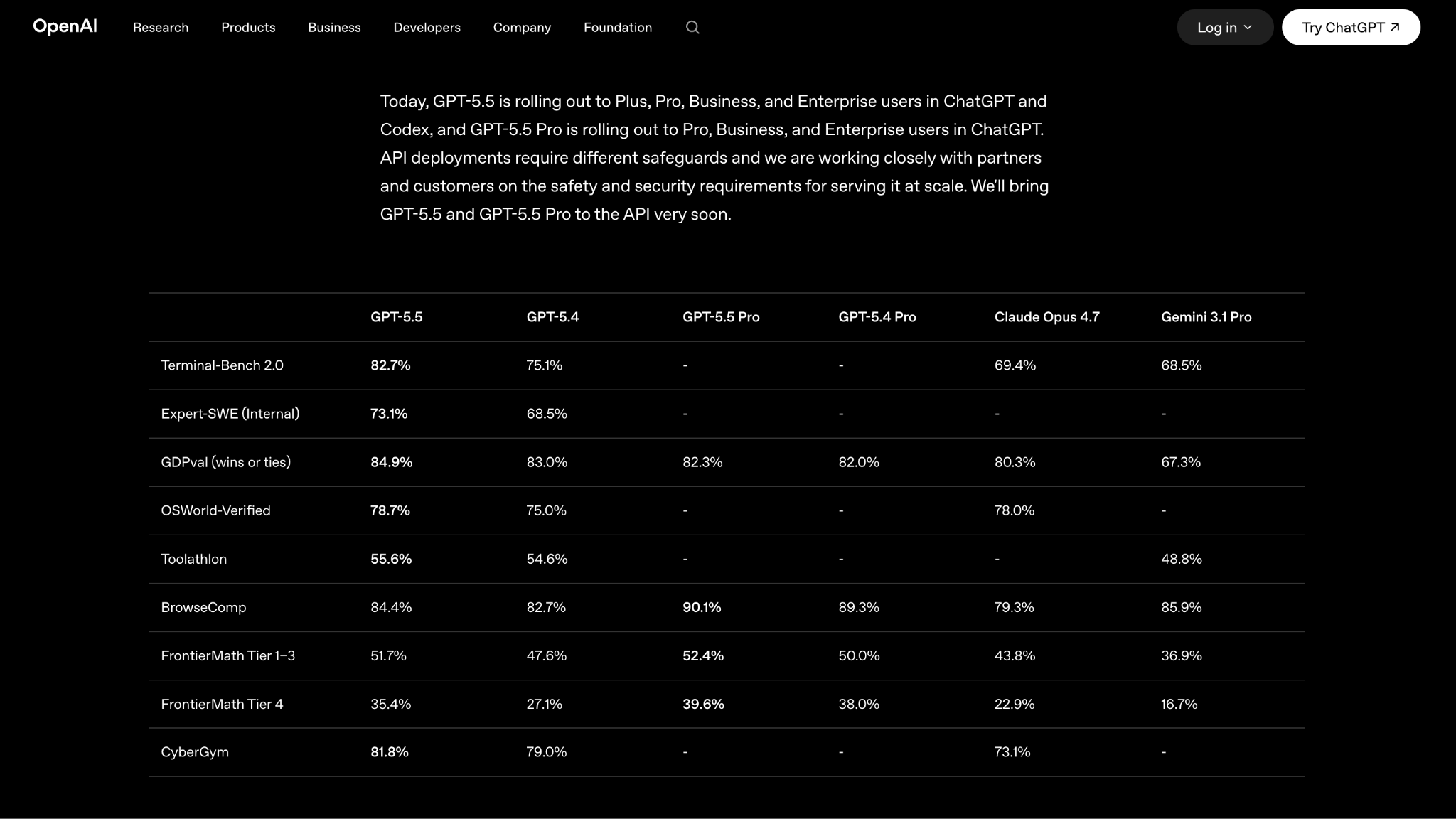

Claude Opus 4.7 is the Claude model to cover current reasoning, coding, and agent workflows. It is Anthropic’s latest flagship model and is designed for complex software engineering, structured reasoning, long-running tasks, and high-accuracy knowledge work. Compared with older Opus 4.5 references, Opus 4.7 is a stronger fit for a current 2026 LLM ranking because it reflects Anthropic’s latest model improvements.

Claude Opus 4.7 is especially relevant for developers and enterprise teams that need depth over speed. It can support complex debugging, codebase analysis, multi-step planning, document reasoning, and agentic workflows where accuracy and instruction-following matter more than low-cost output.

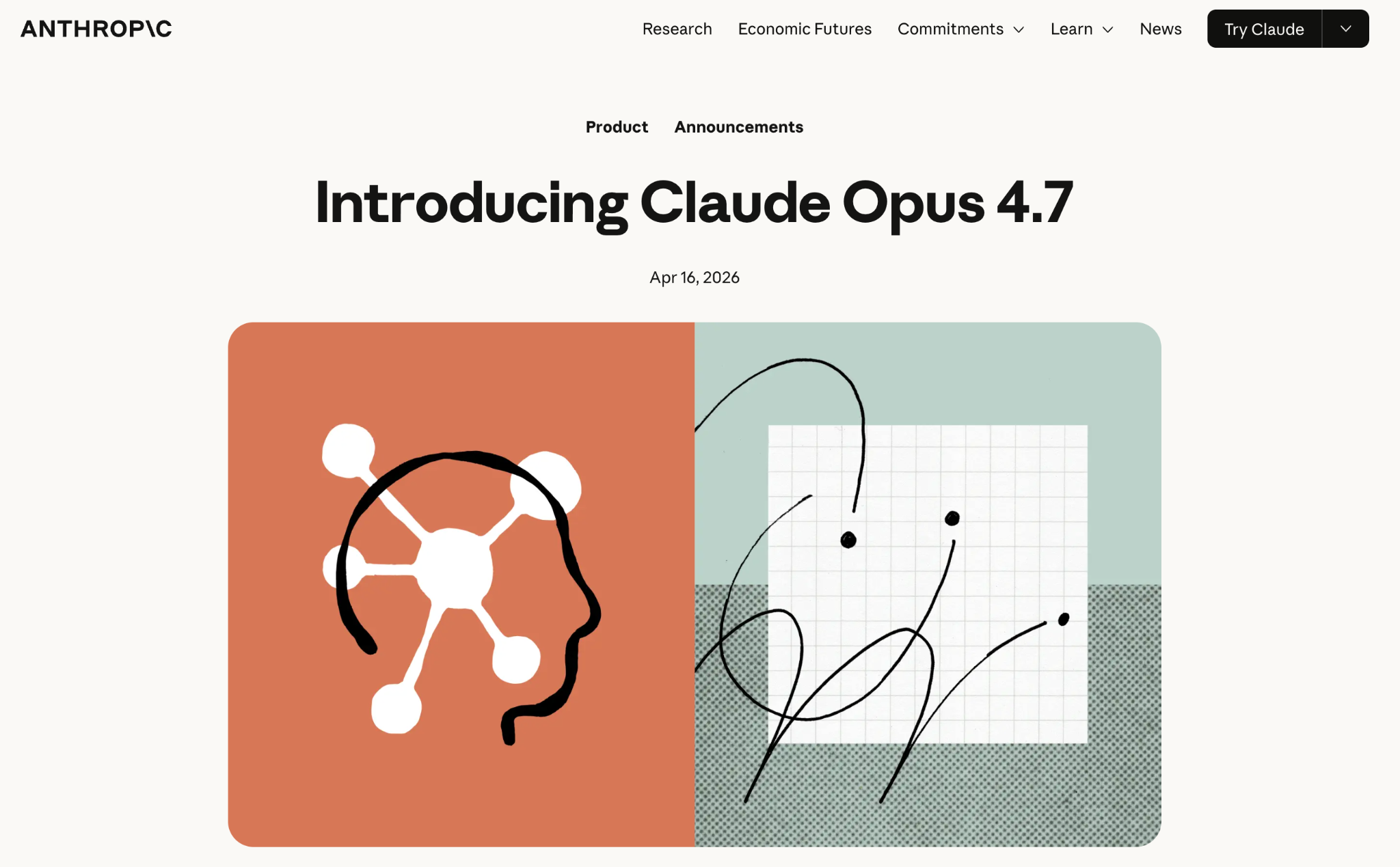

GPT-5.5 is OpenAI’s current frontier model for coding and professional work, with strong performance across complex reasoning, research, software development, data analysis, and enterprise workflows. OpenAI lists GPT-5.5 with a 1,050,000 token context window, reasoning token support, and standard API pricing of $5 input and $30 output per 1 million tokens.

For teams comparing the best LLMs in 2026, GPT-5.5 is a more accurate OpenAI recommendation than GPT-5.1. GPT-5.1 can be mentioned only as an earlier version or historical benchmark reference, but it should not be presented as the current OpenAI option for reasoning, mathematics, multimodal work, or enterprise applications.

What Is The Difference Between LM Arena vs. Traditional Benchmarks?

LM Arena rankings are based on blind human preference comparisons, where users choose the stronger response between two anonymous models. This makes LM Arena useful for understanding how models perform in broad, real-world interactions such as writing, reasoning, coding, and general problem-solving.

Traditional benchmarks, such as GPQA Diamond, SWE-bench, AIME, and MMLU, test specific capabilities in more controlled conditions. These benchmarks help evaluate technical strengths like scientific reasoning, math performance, software engineering, instruction following, and task completion accuracy.

Both methods are valuable, but neither should be used alone. LM Arena can show which models people prefer in everyday use, while traditional benchmarks reveal how models perform on defined tasks. For a current LLM ranking, the best approach is to compare benchmark results alongside pricing, context window, access type, deployment flexibility, latency, and the specific use case.

Coding Excellence and Developer Tools

Claude Opus 4.7 is the Claude model to cover current coding and software engineering workflows. It is designed for complex codebase analysis, debugging, multi-step implementation, architectural planning, and agentic development tasks. Compared with older Claude Opus 4.5 references, Opus 4.7 is a stronger fit for a current 2026 ranking because it reflects Anthropic’s latest model improvements for advanced software work.

GPT-5.5 is also a leading option for coding, especially for teams already building with OpenAI’s ecosystem. It is well-suited for software development, data analysis, technical research, and enterprise workflows that require strong reasoning across long inputs. For open or more flexible coding workflows, Qwen3-Coder and Mistral Medium 3.5 are worth including because they give developers stronger deployment and cost-control options.

Months ago, GPT-5.2-high was a strong OpenAI option for coding and complex professional workflows, but GPT-5.5 is now the more current model to cover in this section. It is OpenAI’s current frontier model for coding, reasoning, research, and long-context technical work. With a 1,050,000 token context window, reasoning token support, structured outputs, function calling, and image input, GPT-5.5 is better aligned with advanced software development tasks than older GPT-5.2 references.

For teams that need maximum accuracy, GPT-5.5 Pro can be mentioned as the premium option. It is priced higher than GPT-5.5, at $30 input and $180 output per 1 million tokens, and is positioned for smarter, more precise responses. For lower-cost coding workloads, GPT-5.4 or GPT-5.4 mini may be more practical than GPT-5.5 Pro.

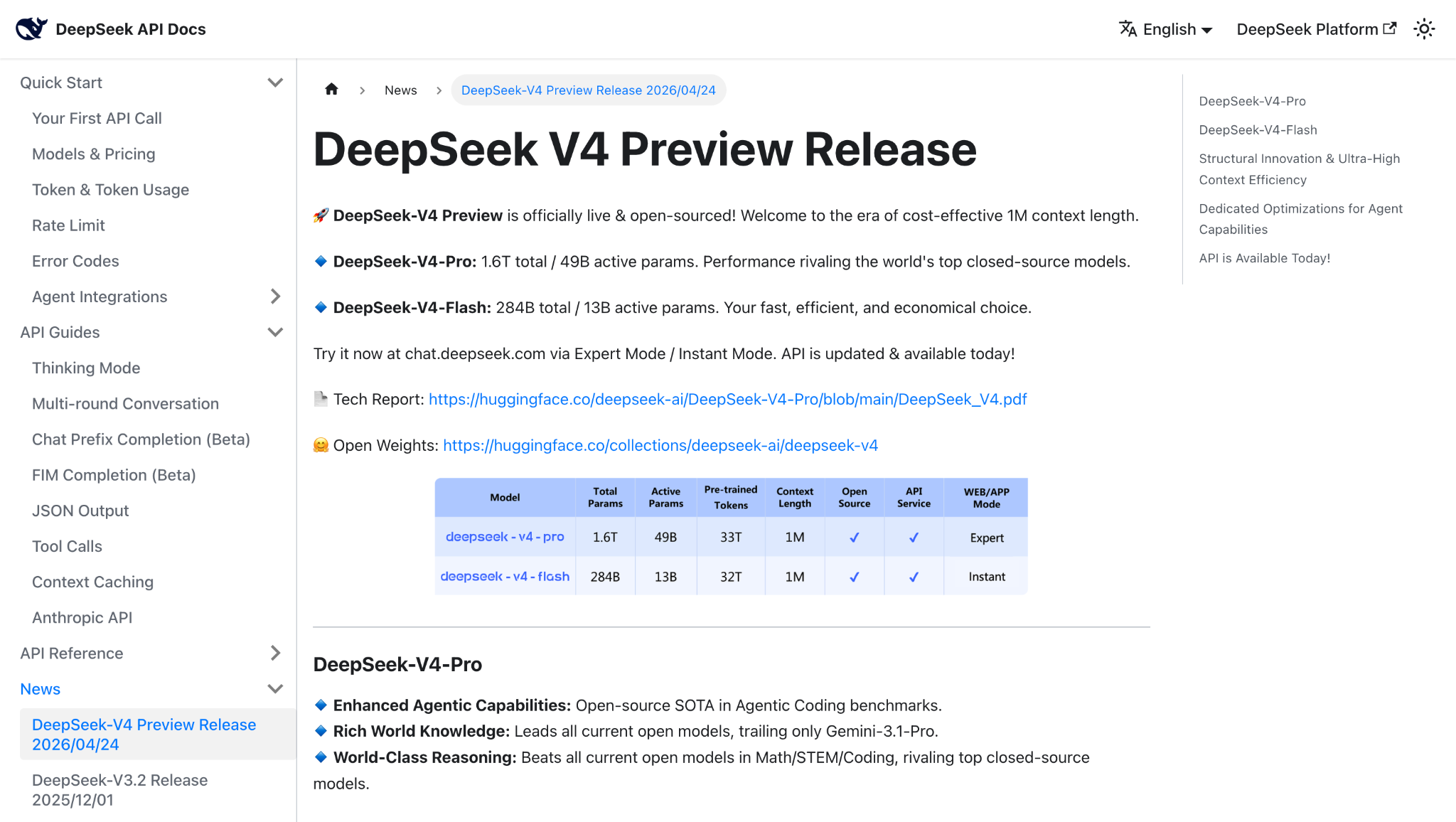

DeepSeek V4 Flash is the better DeepSeek model to cover for current cost-effective coding, reasoning, and high-volume AI workflows. It supports a 1 million token context window and is positioned as the lower-cost V4 option, with reasoning capabilities that closely approach V4 Pro on many tasks. DeepSeek’s API docs also note that the older deepseek-chat and deepseek-reasoner model names now correspond to the non-thinking and thinking modes of DeepSeek V4 Flash.

For teams that need stronger performance, DeepSeek V4 Pro can be mentioned as the higher-capability option. It is designed for advanced reasoning, coding, and long-horizon agent workflows, while V4 Flash is the more practical choice for cost-sensitive production use. DeepSeek R1-0528 can still be referenced as an earlier open-source reasoning model known for math, programming, and logic benchmarks, but it should not be the main DeepSeek recommendation in the current ranking.

Open-Source Coding Champions

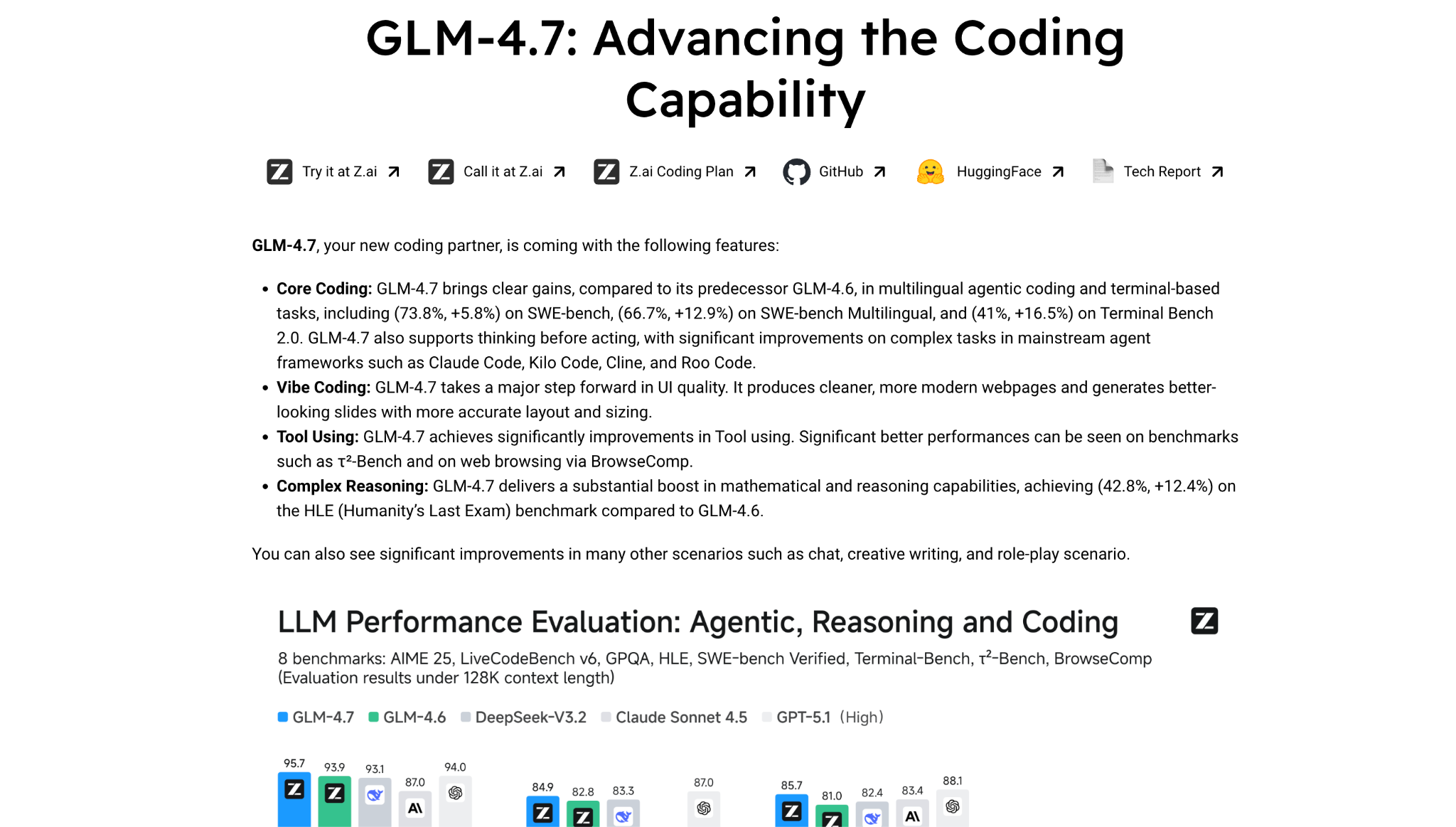

- Open-source and open-weight coding models have become serious options for teams that need more control over deployment, cost, and customization. Qwen3-Coder-480B-A35B-Instruct is a strong choice for agentic coding, tool use, browser use, and repository-level reasoning. GLM-4.7 remains relevant for multilingual coding, terminal-based tasks, and agent frameworks, while MiniMax should be updated to the latest available coding-focused version, such as MiniMax-M2.7, if it stays in the current ranking.

- These models may not replace frontier closed models for every enterprise coding workflow, but they give engineering teams more flexibility for self-hosting, experimentation, cost control, and specialized development environments.

Cost-Effectiveness and Accessibility Analysis

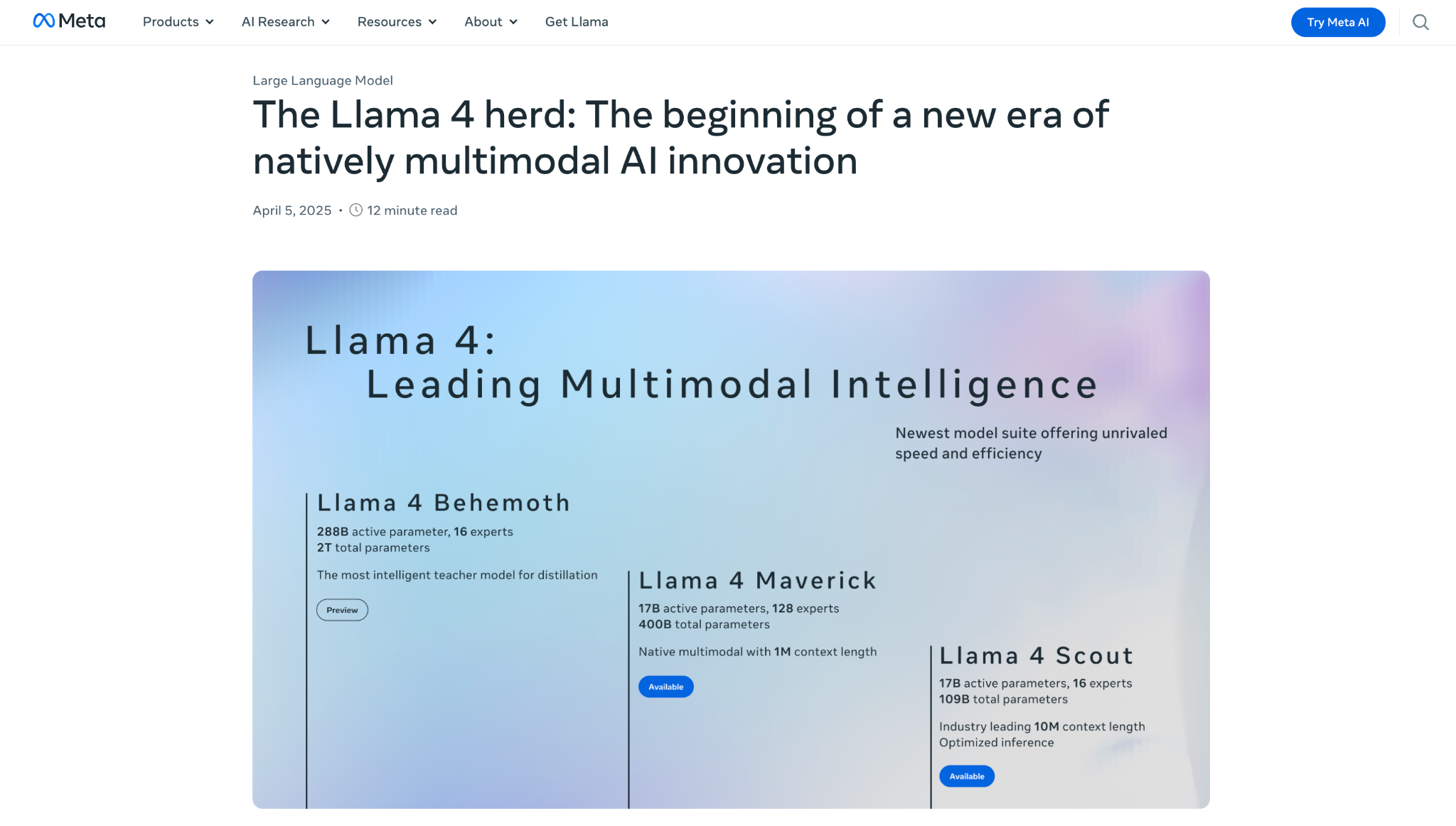

Meta’s Llama 4 series remains one of the strongest open-weight options for teams that need more control over deployment, customization, and long-term infrastructure costs. The two main variants serve different needs: Llama 4 Scout is built for extreme long-context work, while Llama 4 Maverick offers a more balanced option for multimodal reasoning, coding, and enterprise AI applications.

Llama 4 Scout uses 17 billion active parameters with 16 experts and supports a 10 million token context window, making it useful for workflows that involve large document collections, long codebases, legal files, research archives, or enterprise knowledge systems. Meta says Scout can fit on a single NVIDIA H100 GPU with quantization, which makes it more accessible than many larger open-weight models for teams with the right infrastructure.

Llama 4 Maverick uses 17 billion active parameters with 128 experts and is designed as the more general-purpose option in the Llama 4 family. It is better suited for teams that want strong multimodal capabilities, coding and reasoning support, and flexible deployment without relying fully on a closed API provider. Meta positions Maverick as a high-performance-to-cost model and a stronger fit for broader production use than Scout when extreme context length is not the main requirement.

Mistral Medium 3.5 replaces Mistral Medium 3.1 as the stronger value-focused Mistral model for current comparisons. It is a dense 128B open-weight model with a 256k context window, built to handle instruction-following, reasoning, and coding in a single set of weights. Mistral positions it as a frontier-class multimodal model optimized for agentic and coding use cases, making it a practical option for teams that need strong performance without relying only on closed frontier models.

At $1.50 per million input tokens and $7.50 per million output tokens, Mistral Medium 3.5 is no longer the ultra-low-cost $0.40 option mentioned in the older draft. Its value comes from the combination of open-weight access, multimodal capability, long-context support, coding performance, and deployment flexibility. It can also be self-hosted on as few as four GPUs, which makes it relevant for organizations that want more control over infrastructure, privacy, and model customization.

DeepSeek V4 Flash is the stronger DeepSeek option to cover for cost-effective reasoning, coding, and high-volume AI workflows. It supports a 1 million token context window and uses a mixture-of-experts architecture with 284B total parameters and 13B active parameters. DeepSeek’s API documentation says older deepseek-chat and deepseek-reasoner model names now correspond to the non-thinking and thinking modes of DeepSeek V4 Flash, making it the more current option for production use.

Its main advantage is cost efficiency. DeepSeek V4 Flash is priced at $0.14 per million input tokens and $0.28 per million output tokens, making it useful for teams that need reasoning and coding support at scale without moving every task to a premium frontier model. DeepSeek V4 Pro can be added as the higher-capability option for more demanding reasoning, coding, and agent workflows.

What Are Some Open-Source Highlights?

The open-source and open-weight LLM ecosystem has become much stronger, especially for coding, agentic workflows, long-context reasoning, and self-hosted deployment. Teams can now compare models like Llama 4 Scout, Llama 4 Maverick, Qwen3-Coder, Mistral Large 3, Mistral Medium 3.5, and the GLM family for more control over cost, privacy, infrastructure, and customization.

GLM-4.7 can still be mentioned as a notable open-source coding model, but avoid using old LM Arena rankings unless they are refreshed. Instead, frame it around multilingual agentic coding, terminal-based tasks, coding-agent frameworks, and MIT-licensed deployment flexibility. If the article keeps Z.ai in the current ranking, briefly note that newer GLM-5 models should also be evaluated for current coding and long-horizon agent tasks.

Meta’s Llama 4 family remains one of the strongest open-weight options for teams that need long-context processing, multimodal capabilities, and more deployment control.

- Llama 4 Scout: Best suited for extremely long-context workflows. Its 10 million token context window can support large document sets, research libraries, legal files, or codebases in a single session.

- Llama 4 Maverick: A more balanced open-weight option for multimodal reasoning, coding, multilingual use cases, and enterprise AI applications. It is better suited for broader production use when extreme context length is not the main priority.

DeepSeek models remain strong options for teams that need cost-effective reasoning, coding, and long-context workflows. For current comparisons, DeepSeek V4 Flash is the better model to highlight because it supports a 1 million token context window and is designed for fast, economical inference. DeepSeek describes V4 Flash as the efficient option in the V4 family, while V4 Pro is positioned for stronger reasoning and coding performance.

DeepSeek V4 Flash is especially relevant for high-volume applications where premium frontier models would be too expensive for every request. DeepSeek R1 and V3.2 can still be mentioned as earlier reasoning models, but they should not be the main DeepSeek recommendation in the current ranking.

What Is The Strategic Value of Open Source?

Open-source and open-weight models give teams more control over how they build, deploy, and scale AI systems. They are especially useful for organizations that need stronger data control, custom model behavior, lower dependency on closed API providers, or more flexible deployment options.

Open-source models can provide:

- More control over data privacy through on-premises or private-cloud deployment

- Greater customization for fine-tuning, domain-specific workflows, and specialized use cases

- Less vendor dependency compared with relying only on closed model APIs

- Better cost control for high-volume applications once the infrastructure is already in place

However, self-hosting is not automatically cheaper or easier. It requires GPU infrastructure, model operations expertise, security controls, monitoring, and ongoing maintenance. For many teams, the best approach is a hybrid setup: use closed frontier models for the most complex tasks and open-source models for high-volume, specialized, or privacy-sensitive workflows.

Advanced Capabilities and Specialized Features

The 2026 LLM landscape is defined by larger context windows, stronger multimodal processing, agentic tool use, and more flexible deployment options. These capabilities are changing how teams use LLMs for document analysis, codebase review, research, automation, and enterprise knowledge workflows.

Long-context processing is one of the biggest improvements. Llama 4 Scout remains one of the strongest options for extreme context length, with a 10 million token context window for large document collections, research libraries, legal files, and software repositories. GPT-5.5, Claude Opus 4.7, Gemini 3.5 Flash, Grok 4.3, and Llama 4 Maverick also support large-context workflows, making them useful for multi-step reasoning across long inputs. OpenAI lists GPT-5.5 with a 1,050,000-token context window, Anthropic says Claude Opus 4.7 provides a 1M context window, Google lists Gemini 3.5 Flash with a 1M token context window, and xAI lists Grok 4.3 with 1M context.

Multimodal capabilities are also becoming standard across leading models. Gemini 3.5 Flash is the better Gemini model to cover here instead of Gemini 3 Pro, especially because Google positions it for agentic and coding tasks with strong long-context support. GPT-5.5, Claude Opus 4.7, Mistral Large 3, Llama 4 Maverick, and other current models should also be evaluated for text, image, document, and workflow-based inputs.

Real-time and tool-based workflows should now center on Grok 4.3 rather than Grok 4.1. Grok 4.3 supports configurable reasoning, strong agentic tool calling, and a 1 million token context window, making it more relevant for current-information workflows, research, trend monitoring, and applications that depend on external tools or live data. xAI also lists Grok 4.3 pricing at $1.25 input and $2.50 output per 1 million tokens.

Performance vs. Cost Trade-offs

The current LLM market presents clear trade-offs between performance, price, speed, context length, and deployment control. Premium models like GPT-5.5, GPT-5.5 Pro, and Claude Opus 4.7 are better suited for complex reasoning, coding, research, and enterprise workflows where accuracy matters more than cost. GPT-5.5 is priced at $5 input and $30 output per 1 million tokens, while GPT-5.5 Pro is priced at $30 input and $180 output. Claude Opus 4.7 is priced at $5 input and $25 output per 1 million tokens.

More cost-conscious options include Grok 4.3, Mistral Large 3, Mistral Medium 3.5, DeepSeek V4 Flash, and smaller GPT variants. These models may not always lead every benchmark, but they can be more practical for high-volume production use, internal tools, routing systems, and workflows where cost per request matters. Grok 4.3 is priced at $1.25 input and $2.50 output per 1 million tokens, while Mistral Medium 3.5 is listed at $1.50 input and $7.50 output.

Deployment Flexibility

Open-weight models like Llama 4 Scout, Llama 4 Maverick, Mistral Large 3, Mistral Medium 3.5, Qwen3-Coder, and the GLM family give organizations more control over customization, data handling, infrastructure, and long-term vendor dependency. They can be useful for privacy-sensitive workloads, domain-specific fine-tuning, and high-volume applications where full reliance on closed APIs may become expensive.

However, open-weight deployment is not automatically cheaper. Self-hosting requires GPU infrastructure, engineering resources, monitoring, security controls, and model operations expertise. For many companies, the best setup is hybrid: use premium closed models for the most complex reasoning tasks, and route simpler, high-volume, or privacy-sensitive workloads to open-weight or lower-cost models.

Human Preference vs. Price Analysis

Price does not always map directly to model quality. Some premium models justify higher costs for complex reasoning, coding, multimodal work, and long-context analysis. Others offer better value for repetitive workflows, internal automation, support tools, and cost-sensitive production systems.

A practical pricing breakdown looks like this:

- Premium tier: GPT-5.5 Pro, GPT-5.5, Claude Opus 4.7

Best for complex reasoning, advanced coding, research, and high-value enterprise workflows. - Value tier: Grok 4.3, Mistral Medium 3.5, Mistral Large 3, Claude Sonnet models

Best for teams that need strong performance without routing every request to the most expensive frontier model. - Budget and flexible deployment tier: DeepSeek V4 Flash, smaller GPT variants, Qwen3-Coder, Llama 4 Scout, Llama 4 Maverick, and GLM models

Best for high-volume tasks, self-hosted workflows, open-weight deployment, experimentation, and specialized use cases.

The main takeaway is that the best LLM is not always the highest-ranked or most expensive model. Teams should compare pricing, context window, latency, deployment model, reasoning depth, coding performance, and data requirements before choosing a production model.

Strategic Recommendations by Use Case

The best LLM depends on the workload, budget, deployment requirements, and level of reasoning needed. Use the recommendations below to compare leading models by practical business use case instead of relying on one overall ranking.

Enterprise Development and Coding

For organizations prioritizing coding capabilities, the strongest options to compare are Claude Opus 4.7, GPT-5.5, Qwen3-Coder, Mistral Medium 3.5, and GLM-4.7.

- Claude Opus 4.7: Strong fit for complex software engineering, codebase analysis, debugging, architectural planning, and long-running agent workflows.

- GPT-5.5: Strong OpenAI option for coding, reasoning, data analysis, and enterprise workflows, with a 1M context window and support for complex professional work.

- Qwen3-Coder: Strong open coding model for agentic development, tool use, browser use, and repository-level reasoning.

- Mistral Medium 3.5: Strong value-focused option for coding, instruction following, reasoning, and agentic workflows, with open-weight availability and 256k context.

- GLM-4.7: Useful open-source coding option for teams that need self-hosting, customization, and more deployment control.

For the most complex coding and agentic tasks, use a premium frontier model like Claude Opus 4.7 or GPT-5.5. For cost control, experimentation, or self-hosted development, compare Qwen3-Coder, Mistral Medium 3.5, and GLM-4.7.

Research and Analysis

For research-heavy and analytical workflows, compare GPT-5.5, Claude Opus 4.7, Gemini 3.5 Flash, Llama 4 Scout, and Grok 4.3.

- GPT-5.5: Best fit for complex research, long-context reasoning, data analysis, and professional knowledge work.

- Claude Opus 4.7: Strong for structured reasoning, document analysis, careful synthesis, and high-accuracy research tasks. Anthropic lists Opus 4.7 at $5 input and $25 output per 1M tokens.

- Gemini 3.5 Flash: Useful for multimodal research, fast long-context work, agentic workflows, and coding-related analysis.

- Llama 4 Scout: Strong option for extreme long-context workflows, especially large document collections, legal files, research archives, and code repositories.

- Grok 4.3: Strong fit for research that depends on current information, trend monitoring, external tools, and real-time workflows. xAI lists Grok 4.3 with 1M context and $1.25 input / $2.50 output pricing.

Cost-Conscious Applications

For cost-sensitive applications, do not route every request to the most expensive frontier model. Compare DeepSeek V4 Flash, Grok 4.3, Mistral Large 3, Mistral Medium 3.5, smaller GPT variants, and open-weight models like Llama 4 Scout, Llama 4 Maverick, Qwen3-Coder, and GLM-4.7.

DeepSeek V4 Flash and Grok 4.3 are strong candidates for lower-cost reasoning and high-volume workflows. Mistral Medium 3.5 is also worth considering for teams that need coding, reasoning, multimodal support, and open-weight flexibility without relying only on closed frontier models.

Real-Time and Dynamic Applications

For applications that depend on current information, live research, tool use, or trend monitoring, Grok 4 and Grok 4.1 were relevant earlier options, but Grok 4.3 is now the stronger model to cover. It supports configurable reasoning, function calling, structured outputs, and a 1M context window.

Gemini 3.5 Flash can also be included for fast multimodal and agentic workflows, especially where the task involves coding, multimodal inputs, or long-context processing rather than real-time information retrieval.

Reasoning Models are Becoming Standard

The LLM landscape in 2026 is defined by stronger reasoning, larger context windows, agentic workflows, and more flexible deployment options. Leading models such as GPT-5.5, Claude Opus 4.7, Gemini 3.5 Flash, Grok 4.3, and Mistral Medium 3.5 are no longer built only for text generation. They are increasingly designed to plan, reason, use tools, analyze long inputs, and support multi-step business workflows.

Context window expansion is still one of the biggest differentiators. Models like Llama 4 Scout, GPT-5.5, Claude Opus 4.7, Grok 4.3, Gemini 3.5 Flash, and Llama 4 Maverick make it easier to work with long documents, large research sets, legal files, and software repositories in a single workflow.

Open-weight models are also becoming more competitive. Llama 4, Mistral Large 3, Mistral Medium 3.5, Qwen3-Coder, GLM models, and DeepSeek options give teams more flexibility around deployment, customization, privacy, and cost control. This does not remove the need for closed frontier models, but it gives companies more ways to match each workload with the right model.

The main takeaway is that choosing the best LLM should not come down to one leaderboard position. Teams should compare reasoning depth, coding performance, context window, pricing, latency, deployment requirements, data sensitivity, and long-term infrastructure strategy before selecting a production model.

What Is The Methodology and Data Sources Behind our Research?

- This analysis combines multiple evaluation methods to compare the best LLMs in 2026 across performance, pricing, accessibility, and real-world use cases.

- LM Arena and human preference data: LM Arena helps show how models perform in blind human preference comparisons, where users choose between anonymous model responses. These rankings are useful for understanding broad response quality, but they can change quickly as new model versions are released.

- Automated benchmarks: Benchmarks such as GPQA Diamond, SWE-bench, AIME, MMLU, and coding-specific evaluations help measure more specific capabilities, including scientific reasoning, math performance, software engineering, instruction following, and task completion accuracy.

- Vendor documentation: Official documentation from providers such as OpenAI, Anthropic, Google, Meta, xAI, Mistral, DeepSeek, Alibaba/Qwen, Z.ai, and others was used to review model versions, context windows, pricing, access type, deployment options, and feature availability.

- Use-case fit: The final recommendations are based not only on leaderboard positions but also on reasoning depth, coding performance, multimodal capabilities, context window, pricing, latency, open-source availability, deployment flexibility, and enterprise readiness.

- The goal is to help readers choose the right LLM for a specific workload rather than assume that one model is the best option for every use case.

Last updated: May 22, 2026

.avif)