Reinforcement learning is a type of machine learning that helps machines learn from experience

Reinforcement learning is a type of machine learning that helps machines learn from experience. The basic idea is to let the machine experience different situations and then use feedback to reinforce desirable behavior and discourage undesirable behavior. This helps the machine learn which actions lead to positive outcomes and which do not. Over time, the machine can become quite adept at choosing the correct actions in a given situation. Reinforcement learning has been used successfully in a variety of tasks, ranging from playing games to controlling robotic vehicles.

Reinforcement learning is a powerful machine learning technique that enables agents to learn from their environment by taking actions and observing the results. This type of learning has shown great promise in recent years, with many successful applications in areas such as robotics, gaming, and finance. In fact, we can see its value play out in ChatGPT, which was fine-tuned with reinforcement learning from human feedback (RLHF) before it was made widely available.

There are two main types of reinforcement learning: model-based and model-free. Model-based reinforcement learning focuses on building a model of the environment in order to predict how proposed actions will affect the state of the environment. This approach can be very effective but can also be computationally intensive. Model-free reinforcement learning, on the other hand, does not require a model of the environment and instead learns directly from experience. This is usually simpler and cheaper to compute, but it may require more data in order to learn effectively.

In this tutorial, we will explore both model-based and model-free reinforcement learning methods and apply them to a simple problem. We will also discuss some of the challenges associated with reinforcement learning and ways to overcome them.

Please know this is part of our ongoing guide to Artificial Intelligence and Machine Learning designed for Business Users.

By the end of this post, you will be able to:

- Understand the basics of reinforcement learning

- Apply model-based and model-free reinforcement learning methods to a simple problem

- Discuss some of the challenges associated with reinforcement learning and ways to overcome them.

Separating Supervised Learning, Unsupervised Learning and Reinforcement Learning

While there are many different types of machine learning, the three most common are supervised learning, unsupervised learning and reinforcement learning. In supervised learning, the dataset used to train the model is labeled, meaning that the desired outcome is known. The supervised learning algorithms then learn from this labeled data in order to generalize to new, unseen data.

In contrast, unsupervised learning uses unlabeled data, which means that the desired outcome is not known. Supervised and unsupervised learning algorithms have powerful use cases which we will quickly cover here:

Supervised Learning

Supervised learning is the most widely used type of machine learning. It is used when the desired outcome is known and there is a large amount of labeled data available. Its two main forms are regression, which predicts continuous values (such as prices), and classification, which predicts discrete values (such as labels). There are also more specialized forms of supervised learning, such as sequence prediction and time-series prediction.

Unsupervised Learning

Unsupervised learning is used when the desired outcome is not known, so the data has no labels. The most common type of unsupervised learning is clustering, which groups similar data points together. Another popular type of unsupervised learning is dimensionality reduction, which reduces the number of features in a dataset while still retaining the important information.

Reinforcement learning differs from both supervised and unsupervised learning in that it does not require a pre-collected dataset. Instead, reinforcement learning agents generate their own data by taking actions in their environment and observing the results.

How reinforcement learning developed

Artificial intelligence (AI) is a branch of computer science that deals with the creation of intelligent machines that can work and react like humans. One of the main goals of AI is to create systems that can learn from data and improve their performance over time.

Machine learning is a subset of AI that deals with the development of algorithms that can learn from data. Reinforcement learning is a type of machine learning where algorithms are "reinforced" with positive or negative feedback in order to improve their performance. This feedback takes the form of rewards (positive feedback) or penalties (negative feedback).

Reinforcement Learning is inspired by the way animals learn through rewards and punishments

Reinforcement learning is inspired by the way animals learn through rewards and punishments. Animals learn to avoid pain and seek out pleasure, and this basic principle forms the basis for reinforcement learning. In reinforcement learning, algorithms are designed to maximize the total reward they receive over time.

For example, a robot may be programmed to seek out objects that it is told are valuable. The more valuable the object, the greater the reward, and the more likely the algorithm is to repeat the behavior. In this way, reinforcement learning can be used to create artificial intelligence systems that exhibit intelligent behavior.

How reinforcement learning works

Reinforcement learning is a type of machine learning that helps agents learn how to best navigate their environment by trial and error. The reinforcement learning agent is rewarded for taking actions that lead to positive outcomes, while being penalized for taking actions that result in negative outcomes. Over time, the agent learns which actions are most likely to lead to positive outcomes, and adapts its behavior accordingly.

There are three main components to reinforcement learning:

- Environment: This is the world in which the reinforcement learning agent operates. It can be a physical environment, such as a room a robot moves through, or a virtual environment, such as a video game.

- Agent: This is the agent that is learning how to navigate the environment. The agent can be any type of entity, including a robot, software program, or even a human.

- Rewards: These are the positive and negative outcomes that the agent receives as it interacts with the environment. The rewards help the agent learn which actions are most likely to lead to positive outcomes.

The environment gives the agent a place to learn and interact. At each step, the agent observes the current state of the environment, chooses an action, and receives a reward along with the environment's new state. Repeating this loop over many steps and episodes is what lets the agent gradually favor the actions that lead to positive outcomes.
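To make this loop concrete, here is a minimal Python sketch. The `CorridorEnv` class, its reward values, and the two policies are hypothetical illustrations, not part of any library: the agent starts at position 0 of a short corridor, moves left or right, and the episode ends when it reaches the far end.

```python
import random


class CorridorEnv:
    """Hypothetical toy environment: positions 0..length-1, goal at the right end."""

    def __init__(self, length=5):
        self.length = length
        self.position = 0

    def reset(self):
        """Start a new episode and return the initial state."""
        self.position = 0
        return self.position

    def step(self, action):
        """Apply an action (-1 = left, +1 = right); walls keep the agent in bounds."""
        self.position = min(max(self.position + action, 0), self.length - 1)
        done = self.position == self.length - 1
        # Assumed rewards: +1 for reaching the goal, -0.1 for every other step
        reward = 1.0 if done else -0.1
        return self.position, reward, done


def run_episode(env, policy, max_steps=100):
    """Agent-environment loop: observe the state, act, receive a reward and the next state."""
    state = env.reset()
    total_reward = 0.0
    for step_count in range(1, max_steps + 1):
        action = policy(state)
        state, reward, done = env.step(action)
        total_reward += reward
        if done:
            return total_reward, step_count
    return total_reward, max_steps


def random_policy(state):
    """Pick left or right uniformly at random, ignoring the state."""
    return random.choice([-1, 1])


def always_right(state):
    """A fixed policy that always moves toward the goal."""
    return 1
```

With `always_right`, `run_episode(CorridorEnv(), always_right)` finishes in 4 steps with a total reward of about 0.7 (three step penalties plus the goal reward). A random policy usually wanders longer and collects more penalties, which is exactly the gap a learning agent tries to close.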

Applying model-based and model-free reinforcement learning methods

Model-based reinforcement learning methods use a model of the problem (how states change and which rewards follow each action), either given in advance or learned from experience, and plan with it. Model-free methods skip the model and learn a value function or policy directly from experience. In a simple problem where an accurate model is easy to obtain, model-based methods often reach a good solution with less experience, but in more complex problems where the model is harder to learn, model-free methods may be more successful.

Model-based methods

There are a few different model-based methods. Classic examples are the dynamic programming algorithms value iteration and policy iteration, which assume the transition probabilities and rewards are known and compute the value of every state by repeatedly applying the Bellman equation. Dyna-style algorithms such as Dyna-Q instead learn a model from experience and use it to generate simulated experience for planning. Q-learning and SARSA, which are often mentioned alongside these, are actually model-free methods; they are covered below under TD learning.

These are value-based methods, meaning they focus on computing the long-term expected reward for each state (or state-action pair) and then act greedily with respect to those values.
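As an illustration of model-based planning, the sketch below runs value iteration, a classic dynamic programming method, on a tiny hypothetical MDP whose transition probabilities and rewards are assumed to be known in advance. The states `A`, `B`, and `goal`, the actions, and all the numbers are made up for the example; the model maps each state and action to a list of `(probability, next_state, reward)` outcomes.

```python
# Hypothetical model: MODEL[state][action] -> list of (probability, next_state, reward)
MODEL = {
    "A": {
        "stay": [(1.0, "A", 0.0)],
        "right": [(0.8, "B", 0.0), (0.2, "A", 0.0)],  # moving right sometimes fails
    },
    "B": {
        "left": [(1.0, "A", 0.0)],
        "right": [(1.0, "goal", 1.0)],
    },
    "goal": {},  # terminal state: no actions
}


def action_value(model, values, state, action, gamma):
    """Expected immediate reward plus discounted value of the next state."""
    return sum(p * (r + gamma * values[s2]) for p, s2, r in model[state][action])


def value_iteration(model, gamma=0.9, tol=1e-8):
    values = {s: 0.0 for s in model}
    while True:
        delta = 0.0
        for state, actions in model.items():
            if not actions:
                continue  # terminal states keep a value of 0
            best = max(action_value(model, values, state, a, gamma) for a in actions)
            delta = max(delta, abs(best - values[state]))
            values[state] = best
        if delta < tol:  # stop once a full sweep barely changes anything
            break
    # Extract the greedy policy from the converged values
    policy = {
        state: max(actions, key=lambda a: action_value(model, values, state, a, gamma))
        for state, actions in model.items()
        if actions
    }
    return values, policy


values, policy = value_iteration(MODEL)
print(policy)
```

Because the model is known, no interaction with an environment is needed: the planner simply sweeps over the states until the values settle. Here both `A` and `B` end up choosing `right`, and the value of `B` converges to 1.0 because `right` reaches the goal with certainty.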

Model-free methods

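There are a few different model-free methods, but the most common families are Monte Carlo methods and TD (Temporal Difference) learning. Monte Carlo methods learn by averaging the actual returns observed over complete episodes, while TD learning updates its value estimates after every step, using the difference between its current estimate and the reward just received plus the estimated value of the next state. Q-learning (an off-policy algorithm that learns the value of the best action in each state while following an exploratory policy) and SARSA (an on-policy algorithm that learns the value of the policy it is actually following) are both TD methods. Both families can be used for on-policy or off-policy learning. TD methods can be applied to either episodic or continuing tasks, whereas basic Monte Carlo methods need episodes that terminate.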

Monte Carlo methods use the complete return from each episode, so their estimates are unbiased and they make no assumptions about how states are connected. The drawback is that returns can vary a lot from one episode to the next, so the estimates have high variance and often need many episodes to settle down, and no learning happens until an episode ends. This makes them a natural fit for short episodic tasks, but unsuitable for continuing tasks that never terminate.

TD learning is often more sample efficient than Monte Carlo methods in practice, because it doesn't require complete episodes. It updates each estimate from the next reward and the current estimate of the next state's value (a technique called bootstrapping), which means that it can learn from partial episodes and from tasks that never end. The cost is some bias, since the updates rely on estimates that may themselves be wrong, and TD methods can become unstable when they are combined with function approximation (such as neural networks) and off-policy learning.

So, which model-free learning method is best? It really depends on the particular task and on the amount of data available. TD methods usually learn faster and also work on continuing tasks, while Monte Carlo methods are simpler and unbiased but typically need more episodes and only work when episodes terminate.
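The difference between the two families shows up clearly in their update rules. This minimal sketch assumes a hypothetical two-step episode A → B → terminal state `T` with rewards 0 and then 1, a learning rate `alpha` of 0.1, and no discounting. It applies one constant-step-size Monte Carlo update and the corresponding TD(0) updates to two separate value tables.

```python
def mc_update(values, episode, alpha=0.1, gamma=1.0):
    """Constant-alpha Monte Carlo: after the episode ends, move V(s) toward the actual return G."""
    g = 0.0
    for state, reward, _next_state in reversed(episode):
        g = reward + gamma * g  # return from this state onward
        values[state] += alpha * (g - values[state])


def td0_update(values, transition, alpha=0.1, gamma=1.0):
    """TD(0): right after one step, move V(s) toward r + gamma * V(s') (bootstrapping)."""
    state, reward, next_state = transition
    td_target = reward + gamma * values[next_state]
    values[state] += alpha * (td_target - values[state])


# Hypothetical episode as (state, reward, next_state) transitions; "T" is terminal (value 0)
episode = [("A", 0.0, "B"), ("B", 1.0, "T")]

mc_values = {"A": 0.0, "B": 0.0, "T": 0.0}
mc_update(mc_values, episode)

td_values = {"A": 0.0, "B": 0.0, "T": 0.0}
for transition in episode:
    td0_update(td_values, transition)

print(mc_values, td_values)
```

After this single episode, Monte Carlo has credited both A and B with part of the final reward, while TD(0) has only updated B. A's TD estimate starts to rise on the next episode, once V(B) is non-zero; that is bootstrapping at work.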

Deciding between model-based and model-free methods

There is no perfect answer for how to choose between a model-based and a model-free reinforcement learning method. In general, model-based methods need less experience when an accurate model is available or easy to learn, while model-free methods are often more practical in complex problems where the model is hard to learn. Ultimately, it is worth experimenting with both types of methods and seeing which one works better on the specific problem you are trying to solve.

Finally, there are also policy-based methods, such as policy-gradient algorithms, which directly learn a policy that maps states to actions instead of deriving it from learned values.

Creating a machine learning algorithm using the reinforcement learning approach

There are a few different ways to create a machine learning algorithm using the reinforcement learning approach.

Markov Decision Process

One way is to use a Markov decision process (MDP). MDPs are mathematical models that help define an environment and its possible states, transitions, and rewards. The model is based on the assumption that the agent is making decisions in a sequence of discrete time steps.

In each time step, the agent observes the current state of the environment and chooses an action from a set of available actions. The agent then receives a reward based on the chosen action, and the environment transitions to a new state. This process repeats until the agent reaches a terminal state.

Now that we've got a basic understanding of what MDPs are, let's take a closer look at how they work. To do that, we'll need to introduce some concepts and notation.

MDPs consist of four components: states, actions, rewards, and transitions. Let's start with states. States can be thought of as the different situations or configurations that the agent may find itself in.

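For example, in a simple game like tic-tac-toe, the board has 3 x 3 = 9 squares, and each square can be empty or hold an X or an O. That gives at most 3^9 = 19,683 board configurations (e.g., X in the top-left corner and O in the center), of which 5,478 can actually be reached in legal play. In contrast, chess has an astronomically larger number of possible states, estimated at more than 10^40.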
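Counting tic-tac-toe states makes a nice small exercise: with 9 squares that can each be empty, X, or O there are at most 3^9 boards, and a short search shows how many are actually reachable. This sketch assumes X moves first and that play stops as soon as someone gets three in a row or the board is full; boards are stored as 9-character strings, an encoding chosen just for this example.

```python
# The eight winning lines on a 3 x 3 board, as indices into a 9-character string
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def has_winner(board):
    return any(board[a] != " " and board[a] == board[b] == board[c] for a, b, c in LINES)


def reachable_states():
    """Depth-first search over all boards reachable from the empty board."""
    start = " " * 9
    seen = {start}
    stack = [start]
    while stack:
        board = stack.pop()
        if has_winner(board) or " " not in board:
            continue  # terminal position: no further moves
        player = "X" if board.count("X") == board.count("O") else "O"
        for i, cell in enumerate(board):
            if cell == " ":
                child = board[:i] + player + board[i + 1:]
                if child not in seen:
                    seen.add(child)
                    stack.append(child)
    return seen


print(3 ** 9, len(reachable_states()))
```

This prints `19683 5478`: most of the raw configurations can never occur, for example boards with more O's than X's, or boards where play would already have stopped.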

The second component is actions. Actions are the different choices that the agent can make at each time step. Going back to our tic-tac-toe example, the actions available to a player on each turn are the empty squares where they can place their mark: nine on the first move, and one fewer on each turn after that. Players cannot pass.

Again, chess has many more actions available; for instance, a player can move a pawn forward one square, or a rook forward two squares. When choosing which action to take at each time step, the agent tries to maximize its cumulative reward; we'll talk more about rewards shortly.

The third component is rewards. Rewards are given to the agent for taking certain actions in certain states. They provide a way for us to tell the agent which actions lead to desirable outcomes and which don't.

Going back to our tic-tac-toe example once again, one possible reward function gives the agent +1 point when it gets three of its marks in a row horizontally, vertically, or diagonally, and 0 points otherwise. With this reward function, the goal of the game is clear: achieve three in a row, since every other outcome earns 0 points.
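Written as code, a reward function along these lines might look like the sketch below. The 9-character board encoding (read row by row) and the `agent` parameter are assumptions made for the example.

```python
# The eight winning lines on a 3 x 3 board, as indices into a 9-character string
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def reward(board, agent="X"):
    """+1 if the agent has three in a row horizontally, vertically, or diagonally; 0 otherwise."""
    if any(board[a] == board[b] == board[c] == agent for a, b, c in LINES):
        return 1
    return 0


print(reward("XXX" "OO " "   "))  # X has completed the top row
print(reward("XO " "XO " "  O"))  # nobody has three in a row yet
```

Note that the agent only sees a non-zero reward at the very end of a winning game; every intermediate move earns 0.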

Note that the timing of rewards matters: the further a reward is from the actions that led to it (as in tic-tac-toe, where the reward only arrives at the end of the game), the harder it is for the agent to work out which actions deserve the credit.

Finally, we come to transitions. Transitions model how states change over time as a result of agents' actions. They provide a way for us to keep track of what happens after an action is taken in a given state. Transitions are formally represented by transition probabilities; for example, if an action leads deterministically from State A to State B 100% of the time, we would say that the transition probability from A to B is 1 (or 100%).

If an action leads randomly to either State B or State C with equal probability, we would say the transition probabilities from A to B and A to C are both 0.5 (or 50%). We won't go into too much detail on transition probabilities here; just know that they exist and play an important role in MDPs!
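Transition probabilities are often stored as a table keyed by state and action. This sketch mirrors the two examples above, a deterministic move from A to B and a move that reaches B or C with equal probability. The action names `go_deterministic` and `go_random` are hypothetical, and next states are sampled with Python's `random.choices`.

```python
import random

# Hypothetical transition table: (state, action) -> {next_state: probability}
TRANSITIONS = {
    ("A", "go_deterministic"): {"B": 1.0},
    ("A", "go_random"): {"B": 0.5, "C": 0.5},
}


def sample_next_state(state, action, rng=random):
    """Draw the next state according to the transition probabilities."""
    outcomes = TRANSITIONS[(state, action)]
    next_states = list(outcomes)
    weights = [outcomes[s] for s in next_states]
    return rng.choices(next_states, weights=weights, k=1)[0]


# The probabilities for each (state, action) pair must sum to 1
assert all(abs(sum(p.values()) - 1.0) < 1e-9 for p in TRANSITIONS.values())

rng = random.Random(0)
draws = [sample_next_state("A", "go_random", rng) for _ in range(10_000)]
print(draws.count("B") / len(draws))  # close to 0.5
```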

MDPs are mathematical models for decision making under uncertainty, built around four key concepts: states, the actions available in those states, the rewards for taking those actions, and the transitions that result from them.

Monte Carlo Simulations

Monte Carlo simulations use randomly generated data to approximate the expected value of a function. In mathematics, Monte Carlo methods are a class of computational algorithms that rely on repeated random sampling to obtain numerical results.

For example, to calculate the area of a circle, one could randomly generate points within a square circumscribed around the circle (or any other shape of known area that contains the circle), count how many of those points fall within the circle, and multiply that fraction by the area of the square. The more points that are generated, the more accurate the approximation becomes.
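The circle example translates almost line for line into code. This sketch uses a square of side `2 * radius` centered on the circle; the number of points and the fixed seed are arbitrary choices made so the run is repeatable.

```python
import math
import random


def estimate_circle_area(n_points=100_000, radius=1.0, seed=0):
    """Monte Carlo estimate of a circle's area using a circumscribed square."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(n_points):
        # Sample a point uniformly inside the square
        x = rng.uniform(-radius, radius)
        y = rng.uniform(-radius, radius)
        if x * x + y * y <= radius * radius:
            inside += 1
    square_area = (2 * radius) ** 2
    return square_area * inside / n_points


print(estimate_circle_area(), math.pi)  # the estimate should be close to pi
```

Doubling the accuracy requires roughly four times as many points, since the error of a Monte Carlo average shrinks with the square root of the sample size.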

Monte Carlo methods are also used in many fields outside of mathematics, most notably in computer science and operations research. For example, in computer science, Monte Carlo tree search is a heuristic search algorithm used in game-playing AI to choose good moves. In operations research, Monte Carlo methods are used for optimization and for creating models of probability distributions.

One of the most popular applications of Monte Carlo simulations is in stock market analysis. Financial analysts use these simulations to model different scenarios and gauge the riskiness of certain investment strategies. For example, they might use a Monte Carlo simulation to predict what would happen to stock prices if there were a sudden drop in oil prices.

Monte Carlo methods are also widely used in Reinforcement Learning (RL). RL algorithms are used in various fields such as self-driving cars, video games, and robotics. RL algorithms allow agents to learn from their environment by taking actions and receiving rewards (or punishments) based on those actions.

State Action Pairs and Monte Carlo

A state-action pair combines a state the agent is in with an action the agent takes in that state. After taking the action, the agent may receive a reward or punishment and ends up in a new state. The goal of most RL algorithms is to learn which actions lead to the highest rewards in each state, so that the agent can maximize its reward in the future.

State action pairs are important because they allow agents to learn from their environment and adapt their behavior accordingly. This is what makes RL algorithms so powerful and useful in a wide variety of applications.

For example, consider a simple game where an agent has to choose between two actions, A and B. If the agent chooses action A, they will receive a reward of 10 points. If they choose action B, they will receive a reward of 5 points. The goal of the agent is to maximize its rewards, so it would learn that choosing action A is the better option.

Now imagine that the game changes and the rewards for each action are reversed. Now, if the agent chooses action A, they will receive a reward of 5 points, and if they choose action B, they will receive a reward of 10 points. The agent would need to adapt its behavior and learn that choosing action B is now the better option. State action pairs allow agents to do this by learning from the rewards (or punishments) that they receive.
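The two-action game above can be simulated in a few lines. This sketch assumes the rewards swap halfway through, an epsilon-greedy agent that explores a random action 10% of the time, and a constant step size `alpha`, which weights recent rewards more heavily so the estimates can track the change. All of these settings are illustrative choices, not part of the original example.

```python
import random


def run_game(steps=1000, swap_at=500, alpha=0.1, epsilon=0.1, seed=0):
    """Epsilon-greedy agent estimating the value of actions A and B in a one-state game."""
    rng = random.Random(seed)
    rewards = {"A": 10.0, "B": 5.0}
    estimates = {"A": 0.0, "B": 0.0}
    for t in range(steps):
        if t == swap_at:
            rewards = {"A": 5.0, "B": 10.0}  # the game changes: rewards are reversed
        if rng.random() < epsilon:
            action = rng.choice(list(estimates))  # explore
        else:
            action = max(estimates, key=estimates.get)  # exploit the current best guess
        reward = rewards[action]
        # Constant step size: recent rewards count more, so estimates can follow changes
        estimates[action] += alpha * (reward - estimates[action])
    return estimates


before = run_game(steps=500)  # the game has not changed yet
after = run_game(steps=1000)  # rewards were reversed at step 500
print(max(before, key=before.get), max(after, key=after.get))
```

The occasional random exploration is what lets the agent notice that B has become the better option; an agent that always exploited would keep choosing A long after the change.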

There are many different RL algorithms that use state action pairs in different ways. Some of the more popular algorithms include Q-learning, SARSA, and Monte Carlo methods. Each of these algorithms has its own strengths and weaknesses, so it is important to choose the right algorithm for the task at hand.

In general, state action pairs are a powerful tool that can be used to learn from environments and adapt behavior accordingly. They are an important part of many different RL algorithms, and can be used in a wide variety of applications.

Formally, a state-action pair is a tuple (s, a) consisting of an agent's current state s and the action a that the agent took in that state.

What is a tuple? A tuple is a data structure that consists of a sequence of elements. Tuples are similar to lists, but they are immutable, meaning that they cannot be modified after they are created. Tuples are typically used to store data that is not meant to be changed, such as the days of the week or a person's address.

The agent's goal is to learn a policy, which is a mapping from states to actions, that maximizes its expected reward. To do this, the agent needs to sample from the environment to experience different states and receive feedback about the actions that it took. This is where Monte Carlo methods come in.

Monte Carlo methods estimate the value of a state-action pair by sampling from the environment. The agent generates many episodes (i.e., sequences of states, actions, and rewards), computes the return that followed each visit to a state-action pair (the sum of all subsequent rewards, usually discounted), and averages these returns. The more episodes that are generated, the more accurate the estimate becomes.
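Here is a minimal sketch of first-visit Monte Carlo estimation of state-action values. Each state-action pair is stored as a Python tuple `(state, action)`, which can serve as a dictionary key precisely because tuples are immutable. The two short episodes and the state and action names are hypothetical.

```python
from collections import defaultdict


def mc_action_values(episodes, gamma=1.0):
    """First-visit Monte Carlo estimate of Q(s, a) as the average return after each pair."""
    returns_sum = defaultdict(float)
    returns_count = defaultdict(int)
    for episode in episodes:  # each episode: list of (state, action, reward)
        # Walk backward so g accumulates the (discounted) sum of subsequent rewards
        g = 0.0
        returns = []
        for state, action, reward in reversed(episode):
            g = reward + gamma * g
            returns.append(((state, action), g))
        returns.reverse()  # back to chronological order
        seen = set()
        for pair, pair_return in returns:
            if pair in seen:
                continue  # first-visit: count only the first occurrence per episode
            seen.add(pair)
            returns_sum[pair] += pair_return
            returns_count[pair] += 1
    return {pair: returns_sum[pair] / returns_count[pair] for pair in returns_sum}


# Hypothetical episodes: each reward is listed with the action that earned it
episodes = [
    [("s0", "right", 0.0), ("s1", "right", 1.0)],
    [("s0", "right", 0.0), ("s1", "left", 0.0)],
]
q = mc_action_values(episodes)
print(q[("s0", "right")])  # average of the returns 1.0 and 0.0
```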

TD Learning

In machine learning, there are a variety of ways to update an algorithm's estimates. One popular method is called Temporal Difference (TD) learning. TD learning is a general method for learning to make predictions, and it's most commonly used in reinforcement learning.

Temporal Difference learning is a type of online machine learning. That means that the estimates are updated continuously as new experience comes in. This is different from offline (batch) machine learning, where the model is only updated once all the data has been collected.

In TD learning, the estimates are updated incrementally after each step. Because it doesn't have to wait until an episode ends or all the data has been collected before learning, TD learning suits long or continuing tasks and real-time applications such as robotic control, where the learner needs to take action based on incomplete information.

TD learning works by using a value function to estimate the expected return of a given state (or state-action pair), that is, the long-term reward an agent can expect to receive from that point on. After every time step, the estimate is moved toward a TD target: the reward just received plus the discounted estimate of the next state's value. This target is a one-step sample of the Bellman equation, which relates the value of a state to the values of its successors. The difference between the TD target and the current estimate is called the TD error, and a fraction of it (set by the learning rate) is added to the estimate so that it better approximates the true expected return.
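In symbols, the TD(0) update for a state-value estimate V looks like this, where r_{t+1} is the reward received after leaving state s_t, *gamma* is the discount factor, *alpha* is the learning rate, and \delta_t is the TD error. The numbers in the worked example are made up: a current estimate V(s_t) = 0.5, a reward of 1, *gamma* = 0.9, a next-state estimate V(s_{t+1}) = 0.6, and *alpha* = 0.1.

```latex
\begin{aligned}
\delta_t &= r_{t+1} + \gamma V(s_{t+1}) - V(s_t) \\
V(s_t) &\leftarrow V(s_t) + \alpha \, \delta_t \\[4pt]
\text{Example: } \delta_t &= 1 + 0.9 \times 0.6 - 0.5 = 1.04 \\
V(s_t) &\leftarrow 0.5 + 0.1 \times 1.04 = 0.604
\end{aligned}
```

The positive TD error says the state turned out better than expected, so its estimate is nudged upward; with *alpha* = 0.1 only a tenth of the surprise is absorbed on each step.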

The value function can be represented either as a table or as a neural network. A table representation is used when the state space is small and discrete. A neural network representation is used when the state space is large or continuous. In either case, the goal of TD learning is to find the best way to approximate the expected return so that an agent can make good decisions.

The two best-known TD algorithms for learning action values (and therefore for choosing actions) are SARSA and Q-learning.

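SARSA is a model-free, on-policy algorithm that estimates the value of each state-action pair under the policy the agent is actually following. After each step, it updates its estimate toward the reward received plus the discounted value of the next state and the next action the agent actually chooses. The name comes from the five quantities each update uses: State, Action, Reward, next State, next Action.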

Q-learning is also a model-free algorithm, but it is off-policy: after each step, it updates its estimate toward the reward received plus the discounted value of the best action available in the next state, regardless of which action the agent actually takes next. As a result, it learns the values of the optimal policy even while following an exploratory one.

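Both of these algorithms are popular choices for RL because they are efficient and easy to implement. Neither is uniformly better. Q-learning learns the optimal action values directly, which often makes it the default choice. SARSA accounts for the exploratory actions the agent actually takes, which can lead to safer behavior during training; the classic "cliff walking" example in Sutton and Barto's reinforcement learning textbook shows SARSA learning a safer path than Q-learning while exploration is still switched on.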
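The only difference between the two algorithms is the target each update moves toward. This sketch computes both targets for the same hypothetical situation: two next-state action values, a reward of 0, a discount factor of 0.9, and an agent that, while exploring, will actually take the worse action `left` next.

```python
def sarsa_target(q, reward, next_state, next_action, gamma=0.9):
    """On-policy target: uses the action the agent will actually take next."""
    return reward + gamma * q[(next_state, next_action)]


def q_learning_target(q, reward, next_state, actions, gamma=0.9):
    """Off-policy target: uses the best action in the next state, whatever the agent does."""
    return reward + gamma * max(q[(next_state, a)] for a in actions)


# Hypothetical action values for the next state s1
q = {("s1", "left"): 1.0, ("s1", "right"): 3.0}

print(sarsa_target(q, 0.0, "s1", "left"))                 # 0.9 * 1.0
print(q_learning_target(q, 0.0, "s1", ["left", "right"]))  # 0.9 * 3.0
```

SARSA's target reflects the exploratory move the agent is about to make, while Q-learning's target assumes the agent will act greedily from the next state onward.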

Q-learning is a model-free reinforcement learning algorithm that can be used for problems with discrete state and action spaces. In Q-learning, the goal is to learn the optimal action-value function, which tells us the expected return of taking a particular action in a given state.

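The Bellman equation is the recursive relationship at the heart of this approach: it expresses the value of a state (or state-action pair) as the immediate reward plus the discounted value of what follows. Because each value is defined in terms of the values of its successors, it accounts for all potential future outcomes, and acting greedily with respect to the optimal values gives the optimal decision.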

To do this, we first initialize the Q-values to arbitrary values (zero is a common choice). Then, at each step in an episode, we update the Q-value for the current state and action with an update rule derived from the Bellman equation:

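$$Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ r_{t+1} + \gamma \max_{a} Q(s_{t+1}, a) - Q(s_t, a_t) \right]$$

Where s_t represents the current state, a_t represents the current action, r_{t+1} represents the reward received after taking that action, *gamma* represents the discount factor (which determines how much importance we give to future rewards), and *alpha* is the learning rate, which controls how far each update moves the old estimate toward the target r_{t+1} + *gamma* max_a Q(s_{t+1}, a).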
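The update rule can be tried out on a toy problem. This sketch assumes a hypothetical five-state chain (states 0 to 4, with state 4 as the goal and a reward of 1 for reaching it), an epsilon-greedy behavior policy, and illustrative values *alpha* = 0.5, *gamma* = 0.9, and epsilon = 0.1. Q-values start at zero, and ties between equally valued actions are broken at random.

```python
import random

N_STATES = 5  # hypothetical chain: states 0..4, state 4 is the goal
ACTIONS = ["left", "right"]


def step(state, action):
    """Deterministic chain dynamics; reaching the goal gives reward 1 and ends the episode."""
    next_state = max(state - 1, 0) if action == "left" else state + 1
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)  # explore
            else:
                best = max(q[(state, a)] for a in ACTIONS)
                action = rng.choice([a for a in ACTIONS if q[(state, a)] == best])
            next_state, reward, done = step(state, action)
            # Target: reward plus discounted best next value (nothing follows the goal)
            target = reward if done else reward + gamma * max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q):
    return {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)}


q = q_learning()
print(greedy_policy(q))
```

After training, the greedy policy should move `right` in every non-goal state, and Q(3, right) should be close to 1.0, the reward for stepping into the goal.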

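The discount factor *gamma*, a number between 0 and 1, controls the trade-off between immediate and future rewards: values near 0 make the agent short-sighted, while values near 1 make it weigh long-term rewards almost as heavily as immediate ones. It should not be confused with the trade-off between exploration (trying new actions and states) and exploitation (choosing actions already known to be rewarding), which is usually handled separately, for example by an epsilon-greedy policy that picks a random action with a small probability epsilon.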
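The effect of *gamma* is easiest to see by computing a discounted return, r_1 + *gamma* r_2 + *gamma*^2 r_3 + ... This sketch uses a hypothetical reward sequence in which a single reward of 1 arrives on the third step.

```python
def discounted_return(rewards, gamma):
    """G = r1 + gamma*r2 + gamma^2*r3 + ..., accumulated backward from the last reward."""
    g = 0.0
    for reward in reversed(rewards):
        g = reward + gamma * g
    return g


# The same reward of 1, arriving on the third step, under different discount factors
delayed = [0.0, 0.0, 1.0]
for gamma in (0.0, 0.5, 0.9, 1.0):
    print(gamma, discounted_return(delayed, gamma))
```

With *gamma* = 0 the delayed reward is worth nothing today; with *gamma* = 0.9 it is worth 0.81; with *gamma* = 1 it counts in full.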

Artificial Neural Networks (ANNs)

Reinforcement learning can also be used with artificial neural networks (ANNs). ANNs are computational models that are inspired by the brain and can learn to perform tasks by example.

Like MDPs and Monte Carlo methods, neural network models can be deeply complicated and deserve much more discussion than a few words here.

Neural networks are computer systems loosely modeled after the human brain. Like the brain, ANNs are composed of interconnected nodes, or neurons, that process information. These networks are designed to recognize patterns and make predictions based on data.

So how do ANNs work? Well, let's say you have a data set that you want to use to train an artificial neural network. This data set would be organized into what's called a training set and a testing set. The training set is used to train the ANN, while the testing set is used to evaluate the performance of the ANN.

To train the ANN, you first initialize the weights of the connections between the nodes, usually to small random values. The weights represent how strongly each connection influences the output of the ANN. The training set is then fed into the network, and for each example the network calculates an output value. The error between this output and the desired value is used to adjust the weights (typically by gradient descent, with backpropagation working out how much each weight contributed to the error), and the process is repeated until the error on the training set is acceptably small.
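A full multi-layer network trained with backpropagation is too long to show here, so this sketch uses the simplest possible case: a single artificial neuron (a perceptron) learning the logical OR function. It starts from zero weights rather than random ones to keep the run deterministic, and it uses the perceptron learning rule instead of gradient descent; both are simplifications chosen for the example.

```python
def predict(weights, bias, x):
    """Step activation: output 1 if the weighted sum exceeds 0, otherwise 0."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, lr=0.1):
    weights = [0.0, 0.0]  # zero initialization keeps this example deterministic
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)
            if error != 0:
                # Perceptron rule: nudge each weight in the direction that reduces the error
                mistakes += 1
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if mistakes == 0:
            break  # every training example is now classified correctly
    return weights, bias


# Training set for logical OR: ((input1, input2), desired output)
OR_SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train_perceptron(OR_SAMPLES)
print([predict(weights, bias, x) for x, _ in OR_SAMPLES])
```

The loop mirrors the description above: compute an output, compare it with the desired value, adjust the weights, and repeat until the outputs match the training set.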

Once the ANN has been trained, it can then be tested on data that it has never seen before (the testing set). The accuracy of predictions made by the ANN on this testing set will give you an idea of how well the network has learned to generalize from the training set.

ANNs can be used in reinforcement learning to approximate the value function. Instead of storing a separate average for every state-action pair in a table, the network takes a description of the state (and possibly the action) as input and is trained to predict the return, using either Monte Carlo returns or TD targets as the desired outputs. Because the network generalizes, it can estimate values for states it has never visited, which is what makes large or continuous state spaces manageable. The more experience the agent collects, the more accurate these estimates can become.

Artificial neural networks are important because they offer a versatile tool for solving complex problems. In particular, they excel at tasks that are hard to solve with hand-written rules, such as image recognition and facial recognition. Additionally, given more data and further training, they can continue to get better at these tasks over time.

Deep Reinforcement Learning Algorithms

Deep reinforcement learning algorithms have been shown to be very successful in a wide range of control and decision-making tasks. These algorithms learn by trial-and-error, directly from raw sensory data, using deep neural networks.

Deep reinforcement learning borrows tools from supervised learning, since its neural networks are trained with gradient descent, but instead of labeled examples it learns from a "reward signal" that tells the algorithm which actions lead to successful outcomes. Deep reinforcement learning has been used to achieve impressive results in a variety of disciplines, including robotics, video game playing, and intelligent personal assistants.

There are many different deep reinforcement learning algorithms, but they all share the same core idea: the algorithm tries to learn the optimal way to map states (of the environment) to actions (that the agent should take), in order to maximize a long-term reward.

Recent advances in deep reinforcement learning have been driven by the availability of powerful GPUs and by deep neural network architectures such as convolutional networks (used, for example, in DeepMind's DQN agent, which learned to play Atari games from raw pixels) and long short-term memory (LSTM) networks. Deep reinforcement learning is likely to remain a major force in artificial intelligence research in the years to come.

Benefits and Applications of reinforcement learning

There are many benefits to using reinforcement learning. First, it can be used to solve complex sequential decision-making problems that are difficult for humans or traditional rule-based systems. Additionally, once the environment and reward signal are defined, it learns from its own experience and can keep improving without a human labeling every example. Finally, reinforcement learning is a versatile tool that can be applied to a variety of different domains.

Some potential applications of reinforcement learning include:

- Autonomous driving

- Fraud detection

- Predicting consumer behavior

- Optimizing supply chains

- Robot control

- Weather forecasting

- Natural language understanding

Fraud Detection

Reinforcement learning can be used in fraud detection by training a model to identify patterns that are indicative of fraud, rewarding it for correctly flagged cases and penalizing it for missed fraud or false alarms. This model can then be used to make predictions about whether new data points are likely to be fraudulent. If the model predicts that a data point is likely to be fraudulent, it can trigger an investigation. This would help to reduce the amount of fraud that goes undetected.

There is no one-size-fits-all approach here. Reinforcement learning can be combined with a variety of different techniques, including neural networks and Monte Carlo methods. The choice of algorithm will depend on the specific problem that you are trying to solve.

For instance, a Monte Carlo simulation could be used to generate a large number of possible scenarios (e.g., a customer's purchase history, a series of events leading up to a crime, etc.). These scenarios can then be analyzed to identify patterns that are indicative of fraud.

A Markov decision process could be used to model the steps that a fraudster takes in order to commit fraud, which would help to identify the sequences of actions that are indicative of fraud.

Robotics and automation

Reinforcement learning can be used for robotics and automation in a number of ways. First, it can be used to train robots to perform physical tasks, such as picking up objects or navigating a warehouse, by trial and error. Additionally, robots can continue to refine these skills as they gather more experience. Finally, the same approach can be applied to a variety of different domains, such as manufacturing, logistics, and healthcare.

Some potential applications of reinforcement learning in robotics and automation include:

- Automating repetitive tasks in manufacturing

- Improving the efficiency of package delivery robots

- Assisting surgeons with complex procedures

- Autonomous vehicles for transportation and delivery

Predictive maintenance

Reinforcement learning can be used for predictive maintenance in a number of ways. First, it can be used to train systems to predict when equipment is likely to fail. This information can then be used to schedule maintenance before the equipment fails, reducing downtime and ensuring that the equipment is always in good working order. Second, reinforcement learning can be used to optimize maintenance schedules. This can help reduce the overall cost of maintaining equipment by ensuring that only necessary maintenance is carried out, and that it is carried out at the most efficient times. Finally, reinforcement learning can be used to diagnose equipment problems. This can help identify the root cause of problems so that they can be fixed more quickly and effectively.

Predictive maintenance is a critical part of any manufacturing or production process. By using reinforcement learning, companies can help keep their equipment running smoothly and efficiently and identify and fix problems quickly. This can help to improve overall productivity and reduce costs.

Some potential applications of reinforcement learning for predictive maintenance include:

- Predicting when industrial machines will need repairs

- Forecasting when HVAC systems will need to be serviced

- Determining when medical equipment will need to be replaced

- Planning for traffic disruptions due to construction or accidents

As you can see, there are many benefits to using reinforcement learning. It can be used to solve complex problems, it can keep improving as it gains experience, and it’s versatile enough to be applied in a variety of different domains. If you’re looking for ways to optimize your business’s efficiency, reinforcement learning is well worth exploring.

Deep Learning

As we have covered, reinforcement learning has traditionally been used to solve problems that are too difficult for conventional AI methods. Recent advances in deep learning, however, have allowed reinforcement learning to be applied to far more complex problems.

Deep learning is a subset of machine learning that uses multi-layer neural networks to model high-level abstractions in data. Like other machine learning methods, it allows machines to learn and improve from experience without being explicitly programmed. A deep network is built from a series of layers of processing nodes (neurons), where each layer extracts a different representation of the data. The output of one layer becomes the input of the next until the final layer produces the desired output.
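The layer-by-layer flow can be sketched in plain Python. This is a minimal illustration that assumes a small, fully connected network with hand-picked (hypothetical) weights and a ReLU activation. Real deep learning systems learn their weights from data and are usually built with libraries such as PyTorch or TensorFlow.

```python
def relu(x):
    """A common activation: pass positive values through, clip negatives to zero."""
    return max(0.0, x)


def dense(inputs, weights, biases, activation=None):
    """One layer: each neuron outputs a weighted sum of all inputs plus a bias."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(total) if activation else total)
    return outputs


def forward(x, layers):
    """Feed the output of each layer into the next until the final layer."""
    for weights, biases, activation in layers:
        x = dense(x, weights, biases, activation)
    return x


# Hypothetical hand-picked weights: 2 inputs -> 3 hidden neurons -> 1 output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5], [-1.0, 2.0]], [0.0, 0.0, -1.0], relu),
    ([[1.0, 1.0, 1.0]], [0.0], None),
]
print(forward([2.0, 1.0], layers))  # hidden layer gives [1.0, 1.5, 0.0]; output [2.5]
```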

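Combining the two produces deep reinforcement learning, in which a deep neural network represents what the agent learns, such as its policy or its estimates of how valuable each action is. Many experts believe that deep reinforcement learning is the future of artificial intelligence, as it has the potential to create agents that can autonomously learn and adapt to changing environments.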
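In deep Q-learning (DQN), a widely used form of deep reinforcement learning, a neural network estimates the value Q(s, a) of each action a in a state s. The network is trained so that its predictions move toward the target r + γ · max Q(s′, a′), where r is the reward received and γ is a discount factor. The sketch below computes those targets and the squared-error loss for a hypothetical mini-batch. The network itself is left out, and its outputs are stand-in numbers. In the original DQN design, the next-state values come from a separate, periodically updated "target network".

```python
def td_targets(rewards, next_q_values, dones, gamma=0.99):
    """DQN-style targets: y = r + gamma * max_a' Q(s', a'); no bootstrap at episode end."""
    targets = []
    for r, q_next, done in zip(rewards, next_q_values, dones):
        bootstrap = 0.0 if done else gamma * max(q_next)
        targets.append(r + bootstrap)
    return targets


def mse_loss(predicted, targets):
    """Mean squared error that training reduces by adjusting the network's weights."""
    return sum((p - t) ** 2 for p, t in zip(predicted, targets)) / len(targets)


# Hypothetical mini-batch of 3 transitions, each with 2 possible actions.
rewards = [1.0, 0.0, -1.0]
next_q_values = [[0.5, 2.0], [1.0, 0.0], [3.0, 4.0]]  # stand-ins for network outputs Q(s', a')
dones = [False, False, True]                           # the third transition ends an episode
predicted = [2.0, 1.0, -1.0]                           # stand-ins for Q(s, a) of the actions taken

targets = td_targets(rewards, next_q_values, dones, gamma=0.5)
print(targets)  # [2.0, 0.5, -1.0]
print(mse_loss(predicted, targets))
```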

Some recent work suggests that pre-training a deep neural network on a related task can help when the network is then applied to a reinforcement learning problem. The pre-trained network provides a good starting point for the reinforcement learning algorithm. In effect, it supplies the initial parameters for the reinforcement learning problem, so the agent does not have to begin from random weights. More broadly, a lot of recent work in deep learning has focused on reinforcement learning and artificial intelligence.
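A simple way to use a pre-trained network as a starting point is to copy its hidden layers and attach a fresh output layer with one value per action. Reinforcement learning then fine-tunes the result. The sketch assumes a network stored as a list of (weights, biases) pairs, a format that matches no particular library. `init_from_pretrained` and the example weights are hypothetical.

```python
import copy
import random


def init_from_pretrained(pretrained_layers, n_actions, seed=0):
    """Reuse pre-trained hidden layers and add a new, randomly initialized action head.

    pretrained_layers: list of (weights, biases) pairs, where weights[i] holds the
    incoming weights of neuron i (hypothetical format, for illustration only).
    """
    rng = random.Random(seed)
    # Deep-copy so fine-tuning the RL agent never alters the original pre-trained model.
    body = copy.deepcopy(pretrained_layers)
    hidden_size = len(body[-1][0])  # number of neurons in the last pre-trained layer
    # Only the output head starts from scratch: small random weights, zero biases.
    head_weights = [[rng.uniform(-0.1, 0.1) for _ in range(hidden_size)]
                    for _ in range(n_actions)]
    head_biases = [0.0] * n_actions
    return body + [(head_weights, head_biases)]


# Hypothetical "pre-trained" feature layer: 2 inputs -> 3 learned features.
pretrained = [([[0.2, -0.4], [0.7, 0.1], [0.3, 0.3]], [0.0, 0.1, -0.2])]
q_network = init_from_pretrained(pretrained, n_actions=2)
print(len(q_network), len(q_network[-1][0]))  # prints "2 2": 2 layers, 2 action outputs
```

A common variation is to keep the copied layers frozen at first and train only the new head. Whether that helps depends on how closely the two tasks are related.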

The Future of Reinforcement Learning

The future of reinforcement learning is full of potential. As the technology continues to develop, we are likely to see more and more applications for it. And as reinforcement learning gets better at understanding and responding to complex situations, it is likely to be used in a wider variety of domains.

Because reinforcement learning allows machines to learn from experience, it is a powerful tool that can be applied in many different domains, and it has the potential to revolutionize many industries.

Some possible future applications of reinforcement learning include:

- Developing more sophisticated autonomous vehicles

- Optimizing energy usage in buildings and factories

- Planning for traffic disruptions due to construction or accidents

- Forecasting stock prices and other economic indicators

- Assisting surgeons with complex procedures

- Predictive maintenance for a variety of equipment

As you can see, the future of reinforcement learning is very bright. It has the potential to revolutionize a wide variety of industries and to make our lives easier in a number of ways. We can only wait and see what the future holds for this amazing technology.

Quantum Computing’s Influence on Reinforcement Learning

Quantum computing is a relatively new technology that is just beginning to be explored, and it has the potential to revolutionize many different fields, including reinforcement learning. Quantum computers process information differently from traditional computers. Instead of classical bits, they use qubits, which can exist in superpositions of 0 and 1. For certain kinds of problems, this could allow them to find solutions that are currently too difficult for traditional computers to handle. Quantum computers could also potentially make reinforcement learning more efficient and powerful than it is today.

There is still a lot of research that needs to be done in this area, but the potential for quantum computing to impact reinforcement learning is very exciting. It is likely that we will see more and more applications for quantum computing in the future, as the technology continues to develop.

To sum up, reinforcement learning allows machines to learn from experience and can be applied across many domains. In this blog post, we have looked at some of the ways reinforcement learning can be used for predictive maintenance, robotic process automation, and fraud detection. We have also seen examples of how it is being used today to improve business efficiency.

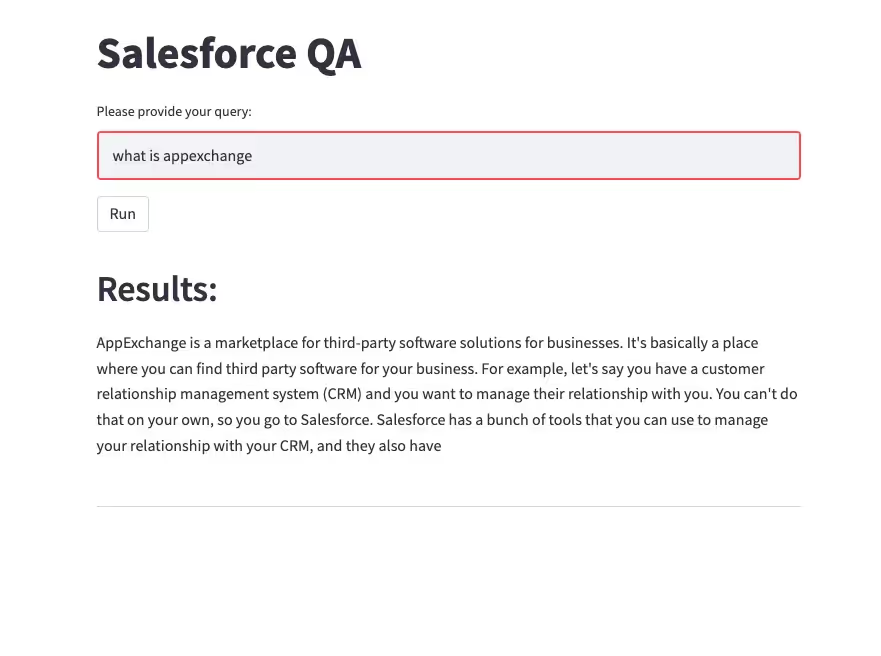

